The TOP500 authors encourage the HPC community to submit entries for the 55th TOP500 and Green500 lists. The June 2020 TOP500 list will be released during the ISC High Performance conference, which will be held in digital form from June 22 to 24 this year due to the COVID-19 pandemic. The submission and publication schedule of the June list is NOT affected by this change.

On Nov. 20th, Beijing time (Nov. 19th local time), SC19 (The Supercomputing Conference 2019) was held in Denver, Colorado, USA. The theme of the conference was “HPC is now”. During the conference, the well-recognized firm Hyperion Research presented Sugon with the Innovation Excellence Award for its impressive innovations in the “Silicon Cube” series of computers. As reported, this is the first time that a domestic Chinese IT enterprise has received the honor.

BERKELEY, Calif.; FRANKFURT, Germany; and KNOXVILLE, Tenn.— The 54th edition of the TOP500 saw China and the US maintaining their dominance of the list, albeit in different categories. Meanwhile, the aggregate performance of the 500 systems, based on the High Performance Linpack (HPL) benchmark, continues to rise and now sits at 1.65 exaflops. The entry level to the list has risen to 1.14 petaflops, up from 1.02 petaflops in the previous list in June 2019.

As one of the most important international conferences in the field of high performance computing (HPC), the ISC High Performance conference (ISC 2019) kicked off at the Frankfurt Convention and Exhibition Center in Germany on June 16. Themed “Fueling Innovation”, the conference highlighted the latest technologies and their applications in the fields of science and business. It attracted over 3,500 engineers, IT experts, system developers, and scientists from around the world.

BERKELEY, Calif.; FRANKFURT, Germany; and KNOXVILLE, Tenn.— The 53rd edition of the TOP500 marks a milestone in the 26-year history of the list. For the first time, all 500 systems deliver a petaflop or more on the High Performance Linpack (HPL) benchmark, with the entry level to the list now at 1.022 petaflops.

Happy New Year! We hope you took some time to enjoy the holidays.

We write with great reluctance to inform you that we will be discontinuing our news publication. Over the past two and a half years, your support and encouragement helped make TOP500 News one of the most widely read in our industry. Thank you!

On the brighter side, the TOP500 project will continue to broaden, as is already evident from the integration of the Green500 and High-Performance Conjugate Gradient (HPCG) benchmark data into the list. Considering what is now happening in the HPC application space with the rise of …

Episode 254: Addison Snell and Michael Feldman discuss the prospects for supercomputing architectures after exascale and Intel's plans, including the pursuit of 3-D memory technology.

A number of established trends in HPC continued to gather momentum in 2018. But there were also a few surprises. Let’s take a closer look at the more notable developments.

Over the next couple of years, Atos will be delivering two BullSequana supercomputers to the Finnish IT Center for Science (CSC), representing 11 peak petaflops of additional capacity.

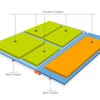

In what could be Intel's most significant architectural advance in decades, the chipmaker unveiled a technology that will enable processors and other logic chips to be integrated into 3D packages.