Chinese researchers have developed a method to measure the intelligence quotient (IQ) of AI applications and found that Google's technology scored nearly as well as a human six-year-old. The researchers also measured applications developed by Baidu, Microsoft and Apple, all of which fared less well.

The US Department of Energy is in the process of revamping the contract for the Aurora supercomputer, shifting its deployment from 2018 to 2021, and increasing its performance from 180 petaflops to over 1 exaflop. That will more than likely make it the first supercomputer in the US to leap over the exascale hurdle.

The world's largest server OEMs announced they will soon be shipping systems equipped with NVIDIA's latest Volta-generation V100 GPU accelerator. Included in this group are Hewlett Packard Enterprise (HPE), Dell EMC, IBM, Supermicro, Lenovo, Huawei, and Inspur, all of which took the opportunity to reveal their Volta-powered servers at this week's GPU Technology Conference in China.

Intel Labs has developed a neuromorphic processor that researchers there believe can perform machine learning faster and more efficiently than conventional architectures like GPUs or CPUs. The new chip, codenamed Loihi, has been six years in the making.

Hyperion Research says 2016 was a banner year for sales of HPC servers. According to the analyst firm, HPC system sales reached $11.2 billion for the year and are expected to grow more than 6 percent annually over the next five years. But it is the emerging sub-segment of artificial intelligence that will provide the highest growth rates during this period.

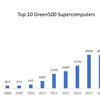

Over the last year, the greenest supercomputers in the world more than doubled their energy efficiency, the biggest jump since the Green500 started ranking these systems more than a decade ago. If such a pace can be maintained, exascale supercomputers operating at less than 20 MW will be possible in as little as two years. But that's a big if.

If there were any doubts that machine learning would spawn chip designs aimed specifically at those applications, they were laid to rest this year. The last 12 months have seen a veritable explosion of silicon built for this new application space.

At the Hot Chips conference this week, Microsoft revealed its latest deep learning acceleration platform, known as Project Brainwave, which the company claims can deliver real-time AI. The new platform uses Intel's latest Stratix 10 FPGAs.

While AI is poised to sweep through major sectors of the economy over the next decade, perhaps no industry should be more welcoming to this technology than healthcare. And given that the US is the most technologically advanced nation in the world, and the one with the most expensive healthcare, the country could end up being the proving ground for AI-powered medicine.

Microsoft has bought Cycle Computing, an established provider of cloud orchestration tools for high performance computing users. The acquisition offers the prospect of tighter integration between Microsoft Azure's infrastructure and Cycle's software, but suggests an uncertain future for the technology on Amazon Web Services (AWS) and Google's cloud platform.