Oct. 3, 2017

By: Michael Feldman

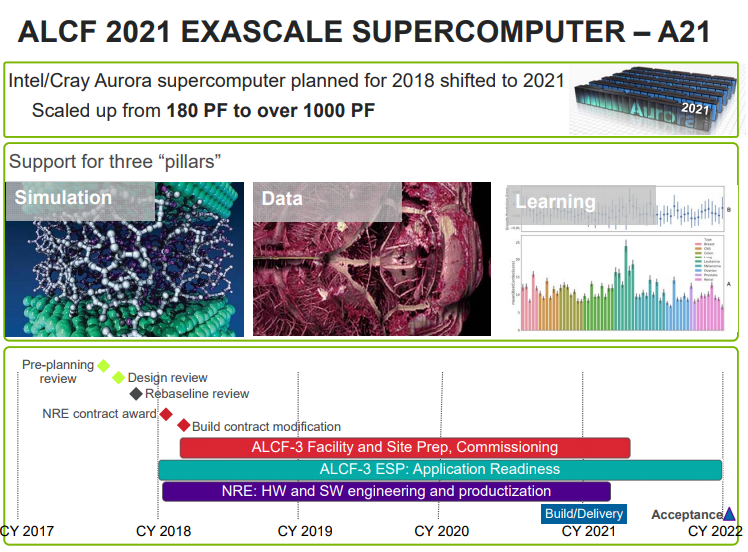

The US Department of Energy is in the process of revamping the contract for the Aurora supercomputer, shifting its deployment from 2018 to 2021, and increasing its performance from 180 petaflops to over 1 exaflop. That will more than likely make it the first supercomputer in the US to leap over the exascale hurdle.

The news was revealed in a presentation at last week’s meeting of the DOE’s Advanced Scientific Computing Advisory Committee. As illustrated by a slide made public during the meeting, construction of the machine will start at the end of 2020, with expected delivery in the first quarter of 2021 and full acceptance in early 2022. Also on the docket is site preparation at the Argonne Leadership Computing Facility (ALCF), where the machine will be installed. Research and development for both the hardware and system software is scheduled to begin next year, an effort that will be funded by the DOE under a two-year NRE (non-recurring engineering) contract. An application readiness program will also take place during this timeframe.

If the proposed project is executed as expected, the new system will be the first exascale system for the US. In fact, the revamped Aurora looks to be the system that reflects the DOE’s recently updated plan to field an exascale supercomputer with a “novel architecture” in advance of more mainstream machines expected in the 2022-to-2023 timeframe. What qualifies as a novel architecture today is open to interpretation, and when you’re talking about leading-edge systems three to five years into the future, the term sort of loses its meaning. What is almost certain, though, is that the system will not rely on the more typical CPU-GPU architecture used by many current multi-petaflop supercomputers, as well as by the DOE’s upcoming pre-exascale CORAL systems, Summit and Sierra.

Aurora was originally funded under the DOE's CORAL pre-exascale initiative and was slated for deployment at Argonne National Laboratory in 2018. That system was to be implemented as a Cray “Shasta” supercomputer equipped with Intel's third-generation “Knights Hill” Xeon Phi processors. It represented the architectural counter-balance to the Summit and Sierra systems, which will be powered by IBM Power9 CPUs and NVIDIA V100 GPUs. But something apparently went wrong with the Aurora work, and the Knights Hill chip looks like the prime suspect.

As of late, Intel has been quiet about the status of Knights Hill, a chip that is dependent on the deployment of the chipmaker's 10nm process technology and the development of the second-generation Omni-Path interconnect. Given that Intel’s transition to 10nm silicon has been slowed significantly (the first chips aren’t expected until next year), it’s reasonable to speculate that the whole project backed up due to this Moore’s Law slippage. Another plausible explanation is that Intel has been so focused on getting AI chips like Knights Mill and Lake Crest into the field that its HPC development efforts got shoved to the back burner. It's also possible that the development of the second-generation Omni-Path interconnect hit some sort of snag.

Presumably the Knights Hill roadmap straightens out at some point, but in any case that’s not likely to be the chip to power the exascale version of Aurora. It will need a processor about five times as powerful and energy-efficient as Knights Hill if the system designers want to keep the node count in the same general vicinity as the original machine (something over 50,000 nodes) and the power draw at around 13 MW. A successor to Knights Hill hasn’t been mentioned yet, but with the help of this proposed DOE funding, a two-year R&D window, and the prospect of 7nm process technology in 2020, Intel should be able to manage something that fits the bill.
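The “about five times” figure follows from simple arithmetic on the numbers above. The sketch below is a back-of-envelope check, not a published design target: it assumes the node count stays at exactly 50,000 (the article says “something over,” so the per-node figures are upper bounds) and that the power budget stays at 13 MW.

```python
# Back-of-envelope check of the per-node and per-watt gains implied by the
# article's figures. Node count and power are ASSUMED to stay fixed.
ORIGINAL_PEAK_PFLOPS = 180.0    # original Knights Hill-based Aurora
EXASCALE_PEAK_PFLOPS = 1000.0   # 1 exaflop = 1,000 petaflops
NODE_COUNT = 50_000             # "something over 50,000 nodes" (lower bound)
POWER_MW = 13.0                 # approximate system power draw


def per_node_tflops(peak_pflops: float, nodes: int) -> float:
    """Peak teraflops each node must deliver (1 PF = 1,000 TF)."""
    return peak_pflops * 1000.0 / nodes


def gflops_per_watt(peak_pflops: float, power_mw: float) -> float:
    """System efficiency in GF/W (1 PF = 1e6 GF, 1 MW = 1e6 W)."""
    return (peak_pflops * 1e6) / (power_mw * 1e6)


# With nodes and power held constant, both per-node performance and
# energy efficiency must grow by the same factor as peak performance.
speedup = EXASCALE_PEAK_PFLOPS / ORIGINAL_PEAK_PFLOPS

if __name__ == "__main__":
    print(f"Required gain: {speedup:.2f}x")
    for label, peak in (("Original", ORIGINAL_PEAK_PFLOPS),
                        ("Exascale", EXASCALE_PEAK_PFLOPS)):
        print(f"{label}: {per_node_tflops(peak, NODE_COUNT):.1f} TF/node, "
              f"{gflops_per_watt(peak, POWER_MW):.1f} GF/W")
```

Under these assumptions the required gain works out to roughly 5.6x, from about 3.6 to 20 teraflops per node and from about 14 to 77 gigaflops per watt.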

The resulting processor will have to support a range of HPC workloads, which now include AI codes. There was a time not so long ago when supercomputers were only expected to run compute-intensive simulations and, if you were lucky, data-intensive analytics. No more. All exascale systems are also expected to support deep learning workloads, which means the underlying architecture will have to deliver high performance for single precision (32-bit), half precision (16-bit), and, ideally, quarter-word (8-bit) math. That shouldn’t be a problem for Intel, inasmuch as all Xeon Phi and Xeon processors going forward are expected to include support for 64/32/16/8-bit floating point and integer instructions, which are already available to a great extent in Intel’s latest Advanced Vector Extensions (AVX-512) capability.
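One reason reduced precision matters for deep learning throughput is that narrower numbers pack more values into each vector register. The sketch below illustrates only that storage arithmetic for a 512-bit register, plus the accuracy cost of half precision using Python's standard `struct` module (format `'e'` is IEEE 754 binary16). It is an illustration under simplifying assumptions, not a performance model: actual throughput depends on which instructions a given processor implements for each type, and the 8-bit entry is treated purely as a storage width.

```python
import struct

VECTOR_BITS = 512  # width of an AVX-512 vector register

# Element widths mentioned in the article (storage width only).
ELEMENT_BITS = {
    "64-bit (double)": 64,
    "32-bit (single)": 32,
    "16-bit (half)": 16,
    "8-bit": 8,
}


def lanes_per_vector(element_bits: int, vector_bits: int = VECTOR_BITS) -> int:
    """How many elements of the given width fit in one vector register."""
    return vector_bits // element_bits


def round_trip_half(x: float) -> float:
    """Store x as IEEE 754 binary16 and read it back, exposing rounding."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


if __name__ == "__main__":
    for name, bits in ELEMENT_BITS.items():
        print(f"{name:>16}: {lanes_per_vector(bits)} values per register")
    # Half precision keeps only 10 explicit mantissa bits, so 0.1 is rounded.
    print("0.1 stored as half precision reads back as", round_trip_half(0.1))
```

Halving the element width doubles the number of values processed per vector operation, which is the trade deep learning codes make in exchange for coarser rounding.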

The DOE’s “rebaseline” review of the Aurora effort suggests other technologies will be included in the 2021 system as well. “The system as presented is exciting with many novel technology choices that can change the way computing is done,” says the rebaseline report. “The committee supports the bold strategy and innovation, which is required to meet the targets of exascale computing. The committee sees a credible path to success.”

What technologies they’re referring to here is not spelled out, but the likely candidates (besides the support for AI computing) are Intel’s silicon photonics and its 3D XPoint memory, both of which are poised to go mainstream in the next couple of years. Last year, Intel released its first silicon photonics products, offering the world’s first 100G transceivers using this technology. And this past May, the company demoed 3D XPoint memory modules, which could significantly expand memory capacity on exascale machinery. We’re also likely to see something new on the interconnect front, possibly a third-generation Omni-Path fabric.

The NRE contract for the updated Aurora supercomputer is scheduled to be awarded early next year, and hopefully by then Intel, Cray, and Argonne National Lab will be able to provide more details on the machine and what the effort will entail. Until then, we can hope that the second iteration of Aurora has a happier ending than the first.