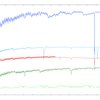

Ethernet remains the most popular interconnect on the TOP500 list, but InfiniBand still rules the roost when it comes to true supercomputing performance. We run the numbers and show how InfiniBand still dominates the top supercomputers in the world.

IBM today unveiled its first Power9-based server, the AC922, which the company is promoting as a platform for AI workload acceleration. The new dual-socket server was announced in conjunction with the official launch of the Power9 processor.

Oak Ridge National Laboratory (ORNL) recently acquired the Atos Quantum Learning Machine (QLM), a quantum computing simulator that lets researchers create qubit-friendly algorithms. The deployment is part of a larger effort at ORNL to develop quantum computing technologies at the US Department of Energy.

After largely ignoring the International Supercomputing Conference (ISC 2017) in Frankfurt this past June, AMD made good use of its time at SC17 in Denver last week to flesh out its high performance computing strategy and show off its latest EPYC CPUs and Radeon Instinct GPUs.

While vendors are busy announcing new HPC offerings at this week's Supercomputing Conference (SC17), Intel announced it is removing its next-generation Knights Hill Xeon Phi product from its roadmap. And that might just be the beginning.

Summit, the most powerful supercomputer in the United States, is currently under construction at the US Department of Energy's Oak Ridge National Lab (ORNL). ORNL director Thomas Zacharia updates us on its status and reveals the opportunities the new machine will provide scientists when it comes online next summer.

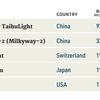

The fiftieth TOP500 list of the fastest supercomputers in the world has China overtaking the US in the total number of ranked systems by a margin of 202 to 143. It is the largest number of supercomputers China has ever claimed on the TOP500 ranking, with the US presence shrinking to its lowest level since the list's inception 25 years ago.

On Tuesday, November 14, attendees of this year's Supercomputing Conference (SC17) keynote address will get an in-depth look into the Square Kilometre Array (SKA) Project, one of the most ambitious international research efforts ever undertaken. We talked with SKA principals Professor Phil Diamond and Dr. Rosie Bolton about what the project entails and what kinds of supercomputing resources will be needed to drive the science.

Measuring high performance computing can be very powerful for the businesses that rely on it and the end users that directly employ it. Based on NAG's experience helping organizations with HPC measurement, we have put together this overview of the subject for TOP500 News.

Purchasing HPC hardware and software, as well as spending time developing and supporting applications, involves numerous options and trade-offs, so making optimal decisions is far from easy. In a conversation with NAG's Andrew Jones, James Reinders describes what those options and trade-offs are and how managers can reach the best decisions on how to invest.