April 17, 2018

By: Michael Feldman

A trio of UK universities will install a set of HPE Apollo 70 clusters powered by Cavium’s ThunderX2 ARM processors. The effort is part of a three-year project, known as Catalyst UK, which is evaluating the potential of ARM-based supercomputing.

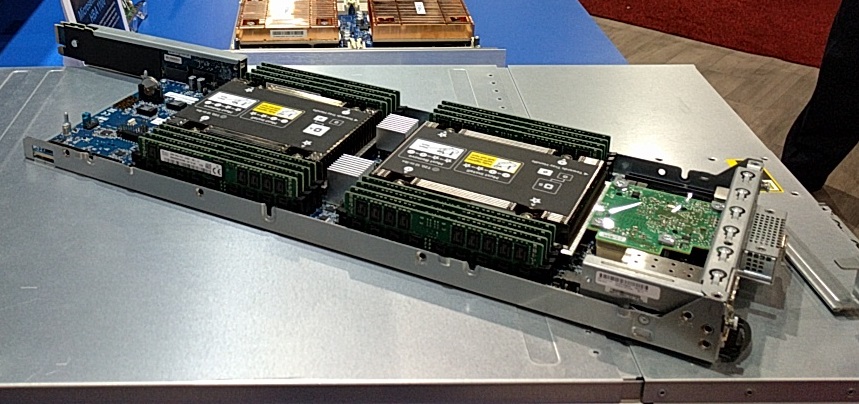

Apollo 70 server board on display at SC17

The three HPC systems will be installed at the Edinburgh Parallel Computing Centre (EPCC) at the University of Edinburgh, the University of Leicester, and the University of Bristol. The Bristol installation is particularly noteworthy since it will represent the second ARM-based cluster for the university. The first such system, named Isambard, was the world’s first production ARM-based supercomputer, a Cray-built CS400 machine funded by the Engineering and Physical Sciences Research Council (EPSRC).

In the case of Catalyst UK, the three HPE deployments will be used to explore the potential of ARM-based HPC for academic and industrial applications. Specifically, the participating universities will be investigating the feasibility of using these clusters for running workloads like AI and other HPC codes that demand a lot of memory bandwidth. The project will also be evaluating these systems as a model for future exascale supercomputers.

“Today’s announcement marks a major step forward in boosting collaboration between the government and business to harness the power of innovation in supercomputing and AI,” said Sam Gyimah MP, Science Minister. “Through our modern Industrial Strategy, AI Grand Challenge and upcoming Sector Deal, the UK will lead the AI and data revolution. Doing so has the potential to increase the UK’s competitiveness in emerging industries around the world, grow our economy and create the high value jobs we need to build a Britain fit for the future.”

According to HPE, the three systems will have the same basic configuration: 64 Apollo 70 nodes, each equipped with two 32-core Cavium ThunderX2 processors and 128 GB of DDR4 memory. The 64-node clusters will use Mellanox InfiniBand as the system interconnect. Each system is expected to take up two computer racks and consume 30 kW of power. The systems will employ SUSE Linux as the OS.

All told, each 4096-core cluster will deliver about 72 peak teraflops, according to numbers supplied to us by Mike Vildibill, VP, Advanced Technologies Group at HPE. That’s not a big system by today’s standards, nor is it particularly impressive with respect to flops/watt; Xeon Skylake-based clusters are about 50 percent more efficient in that regard.
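For readers who want to check the arithmetic, here is a minimal sketch that uses only the figures quoted in this article (64 nodes, two 32-core processors per node, 72 peak teraflops, 30 kW per system). The variable names are ours, and the per-core and per-watt values are derived, not numbers supplied by HPE.

```python
# Back-of-the-envelope check using only figures quoted in the article.
NODES = 64
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 32
PEAK_TFLOPS = 72.0       # peak teraflops per cluster, as quoted
SYSTEM_POWER_KW = 30.0   # expected power draw per cluster, as quoted

# Total core count per cluster.
total_cores = NODES * SOCKETS_PER_NODE * CORES_PER_SOCKET

# Derived values: peak gigaflops per core and per watt.
peak_gflops_per_core = PEAK_TFLOPS * 1000 / total_cores
peak_gflops_per_watt = PEAK_TFLOPS * 1000 / (SYSTEM_POWER_KW * 1000)

print(f"total cores: {total_cores}")
print(f"peak GFLOPS per core: {peak_gflops_per_core:.1f}")
print(f"peak GFLOPS per watt: {peak_gflops_per_watt:.1f}")
```

That works out to 4,096 cores, roughly 17.6 peak gigaflops per core, and about 2.4 peak gigaflops per watt at the system level.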

These days though, the more important HPC metric is often memory performance, since this is the bottleneck for most data-demanding codes (such as applications dealing with sparse matrix structures). According to Vildibill, each of these Apollo 70 nodes delivers 240 GB/second of memory bandwidth on the STREAM benchmark. That’s a good deal better than comparable systems powered by AMD EPYC or Xeon Skylake processors, at least according to recent testing performed by the gang at AnandTech. Even more impressive is the fact that a ThunderX2-powered Apollo node draws only about 469 watts, which is less than half the power consumption of comparable Xeon or EPYC servers.
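To put the memory numbers in context, the sketch below combines the figures quoted in this article: 240 GB/second of STREAM bandwidth and about 469 watts per node, 64 nodes, and 72 peak teraflops per cluster. The variable names are ours. The bytes-per-flop ratio mixes a measured bandwidth with a theoretical peak, so treat it as a rough indicator of machine balance, not a benchmark result.

```python
# Rough machine-balance estimate from figures quoted in the article.
NODES = 64
STREAM_GB_PER_S_PER_NODE = 240.0   # STREAM bandwidth per node, as quoted
NODE_POWER_WATTS = 469.0           # approximate draw per node, as quoted
PEAK_TFLOPS = 72.0                 # peak teraflops per cluster, as quoted

# Aggregate STREAM bandwidth across the cluster, in TB/s.
aggregate_tb_per_s = NODES * STREAM_GB_PER_S_PER_NODE / 1000

# Peak gigaflops available on each node.
peak_gflops_per_node = PEAK_TFLOPS * 1000 / NODES

# Bytes of memory traffic available per peak floating point operation.
bytes_per_flop = STREAM_GB_PER_S_PER_NODE / peak_gflops_per_node

# Summed node power, to compare against the 30 kW system figure.
total_node_power_kw = NODES * NODE_POWER_WATTS / 1000

print(f"aggregate STREAM bandwidth: {aggregate_tb_per_s:.2f} TB/s")
print(f"bytes per peak flop: {bytes_per_flop:.2f}")
print(f"summed node power: {total_node_power_kw:.1f} kW")
```

The summed node power comes to about 30 kW, which lines up with the per-system power figure HPE quoted.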

With this announcement, the UK appears to be setting itself up to be the proving ground for ARM-flavored HPC, especially with regard to developing applications and other software for the ecosystem. That’s not entirely surprising given that ARM Holdings is a British enterprise (notwithstanding its current ownership by Japanese multinational SoftBank). That said, ARM-based systems are also being trialed in conjunction with the EU’s Mont-Blanc Project using these same ThunderX2 chips in a Bull sequana machine. And in the most ambitious use of ARM technology to date, RIKEN and Fujitsu will employ a vector-enhanced ARM variant, known as ARMv8-A SVE, for Japan’s future Post-K exascale supercomputer.

A handful of HPC customers in the US are also starting to warm up to ARM. Los Alamos National Lab has been experimenting with the technology for at least a year using a Raspberry Pi cluster, and with Cray’s recent announcement of an ARM option on the XC50, the company revealed they have Department of Energy customers looking at these systems. (The DOE, by the way, was interested enough in ARM to award Cray some FastForward 2 funding to help develop this platform.) Finally, Argonne National Lab signed a deal last year with HPE to build a 32-node ThunderX2 machine for evaluating the technology. That system is almost certainly another Apollo 70 cluster.

The three Apollo 70 clusters going to the UK are scheduled to be installed sometime this summer. Vildibill says HPE has other HPC customers in the pipeline as well, but they’re unable to disclose their names at this time. Stay tuned.