May 26, 2017

By: Michael Feldman

The lack of memory capacity in traditional computer clusters is a significant limitation to application performance in the datacenter. A new memory disaggregation technology developed at the University of Michigan is designed to help alleviate this critical obstacle.

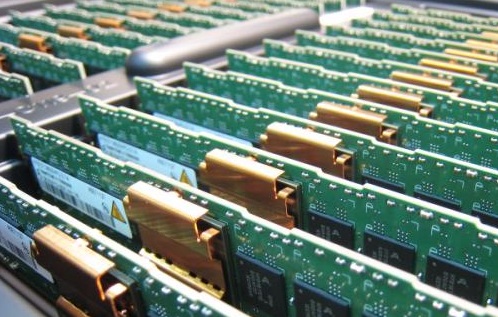

The problem is common to many datacenter environments, from HPC clusters to cloud server farms. In general, DRAM capacity is often a limiting factor in these scaled-out systems, since compute capability has grown faster than memory capacity. Even when the total amount of memory across the entire system is adequate, local demands may overwhelm (or underwhelm) particular servers.

Researchers at the University of Michigan think they have found an answer, at least for clusters whose networks support Remote Direct Memory Access (RDMA). They claim that the software they have developed, known as Infiniswap, can “boost the memory utilization in a cluster by up to 47 percent, which can lead to financial savings of up to 27 percent.”

In a nutshell, the software tracks memory utilization across the cluster, and when a server runs out of memory, it borrows memory from other servers with spare capacity. Although accessing remote memory is slower than accessing local memory, it is much faster than swapping the data out to disk, the usual way of freeing up memory.
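To make the borrow-or-fall-back-to-disk idea concrete, here is a minimal Python sketch. It is not Infiniswap's code, and the article does not describe how the software chooses which server to borrow from. The sketch assumes a "power of two choices" rule (sample two servers with spare capacity and use the one with more free memory), a fixed 4 KB page size, and in-process dictionaries standing in for remote memory and disk. The names `Server`, `choose_remote`, `swap_out` and `swap_in` are all hypothetical.

```python
import random
from dataclasses import dataclass, field

PAGE_SIZE = 4096  # assumed page size in bytes, for illustration only


@dataclass
class Server:
    """Hypothetical stand-in for a remote server that can lend memory."""
    name: str
    free_bytes: int
    stored: dict = field(default_factory=dict)  # page_id -> page data


def choose_remote(servers, rng):
    """Assumed placement rule: power of two choices among servers with room."""
    candidates = [s for s in servers if s.free_bytes >= PAGE_SIZE]
    if not candidates:
        return None  # no spare remote memory anywhere
    if len(candidates) == 1:
        return candidates[0]
    a, b = rng.sample(candidates, 2)  # sample two distinct candidates
    return a if a.free_bytes >= b.free_bytes else b


def swap_out(page_id, data, servers, disk, rng):
    """Evict a page to remote memory if possible, otherwise to 'disk'."""
    target = choose_remote(servers, rng)
    if target is None:
        disk[page_id] = data  # slow path: the traditional disk swap
        return "disk"
    target.stored[page_id] = data
    target.free_bytes -= PAGE_SIZE
    return target.name


def swap_in(page_id, servers, disk):
    """Bring a page back, releasing the remote memory it occupied."""
    for s in servers:
        if page_id in s.stored:
            s.free_bytes += PAGE_SIZE
            return s.stored.pop(page_id)
    return disk.pop(page_id)
```

With servers lending three pages and one page respectively, the first four evicted pages land in remote memory and only the fifth falls back to disk. Sampling two servers rather than surveying the whole cluster is one way a placement decision can stay cheap and local, which is in the spirit of the distributed design described in the next paragraph.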

According to the researchers, the Infiniswap software that does this is itself distributed, so it can scale with the size of the cluster. Other than network gear that supports RDMA, Infiniswap isn’t dependent on any particular type of hardware and doesn’t require modifications to the applications.

The researchers tested Infiniswap on a 32-node cluster with typical workloads that stress the memory subsystem, specifically “data-intensive applications that ranged from in-memory databases such as VoltDB and Memcached to popular big data software Apache Spark, PowerGraph and GraphX.” They found that the software improved throughput by 4 to 16 times, and tail latency (the latency of the slowest operations) by a factor of 61.

According to project lead Mosharaf Chowdhury, Infiniswap wouldn’t be practical without today’s faster networks. "Now, we have reached the point where most data centers are deploying low-latency RDMA networks of the type previously only available in supercomputing environments," he explained.

Infiniswap is open source and is available on GitHub. A paper providing a detailed description of the software and how it works is also available.