Oct. 31, 2017

By: Michael Feldman

The National Computational Infrastructure (NCI) in Canberra has integrated IBM Power8 nodes into its Raijin petascale supercomputer.

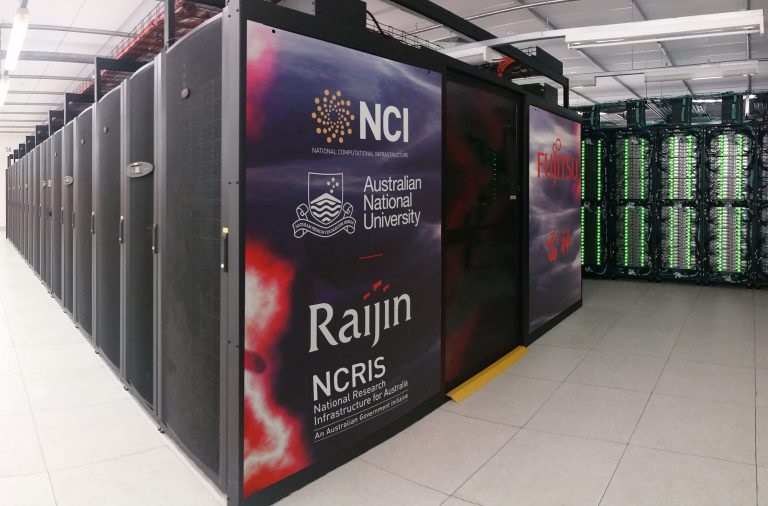

Raijin supercomputer. Source: National Computational Infrastructure (NCI)

IBM did not specify the extent of the Power8 server upgrade, and NCI’s Raijin website has not been updated to reflect the integrated nodes. What is known is that even before the IBM nodes were added, Raijin was the speediest system in Australia, not to mention the Southern Hemisphere. Pre-Power8, Raijin delivered a peak performance of 1.875 petaflops and a Linpack mark of 1.676 petaflops. The base system is a mishmash of Fujitsu PRIMERGY and Lenovo nx360 M5 nodes, and is equipped with Intel “Broadwell” Xeon and Xeon Phi processors, as well as NVIDIA Tesla P100 GPU accelerators.

But it’s not the flops IBM is touting for the new Power nodes, it’s the memory speeds they can deliver. A dual-socket Power8 server can provide up to 384 GB/second of memory bandwidth, which is more than double that of the “Broadwell” Xeon servers in Raijin. Dave Turek, Vice President, HPC and OpenPower, IBM Systems, says the superior bandwidth of the new Power8 nodes will be key to accelerating codes on the upgraded supercomputer. “For now, NCI researchers are utilizing these nodes because the extraordinary memory bandwidth by itself provides significant performance advantage for some of their applications,” said Turek.

According to IBM’s press release, NCI has been busy porting a number of memory-intensive application kernels to the Power8 platform and has already demonstrated some performance advantages over the x86 hardware running those codes. For example, a Power-optimized version of Q-Chem, a quantum chemistry package, outran the same software running on the Xeon “Broadwell” x86 nodes. NCI also optimized the NAMD molecular dynamics code for the Power platform and realized similar performance gains. In addition, initial benchmarks of a Power-based implementation of MILC, a MIMD Lattice Computation package, ran slightly faster than the corresponding code on x86 hardware.

Turek says the superior memory bandwidth will also provide “the opportunity for those researchers to explore the intersection of AI and HPC across a wide range of scientific applications.” In particular, according to Turek, the ability to use machine learning to steer HPC simulations and improve the interpretation of their results offers a real opportunity to advance HPC capabilities.

At the upcoming SC17 conference in Denver, no doubt IBM will be talking up its Power servers and its strategy to make this platform suitable for HPC/AI-blended workloads. IBM’s Power8 and upcoming Power9 CPUs are the only commercial processors that can natively support NVIDIA’s NVLink interconnect for speeding CPU-to-GPU communications, and IBM is leveraging that capability as it continues to port over the most popular machine learning frameworks. It’s an approach that IBM believes gives it an edge against its competitors, and one it will continue to promote at every opportunity.