May 8, 2017

By: Michael Feldman

As NVIDIA’s GPU Technology Conference (GTC) kicks off this week in San Jose, California, vendors are lining up to announce their latest GPU computing wares. Even before the main conference festivities commenced, Supermicro, Inspur, and Boston Limited took the opportunity to launch their new NVIDIA Tesla P100 servers.

P100-powered servers are nothing new, of course. Nowadays just about every major OEM and system provider has some sort of platform based on NVIDIA’s latest and greatest GPU, and thanks to the rise of deep learning, many of these solutions have crammed four, eight, or more of these P100s into a single enclosure. For training neural networks, it’s hard to have too many GPUs, and the P100 is especially good at this task thanks to its support for single precision (FP32) and half precision (FP16) math. That said, an increasing number of more traditional HPC codes can also make use of multiple GPUs.

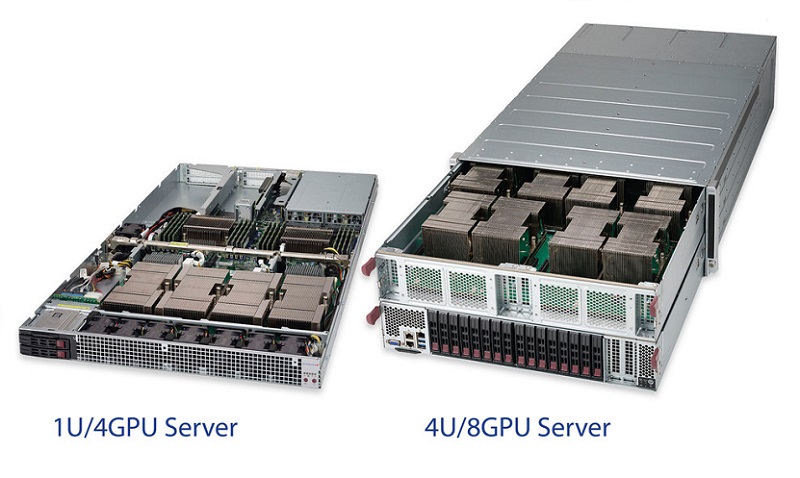

Supermicro, for example, has come up with a trio of new servers with either four or eight of the P100s. The company is aiming these new offerings at HPC, advanced analytics, and AI workloads.

The 4028GR-TXR server is Supermicro’s eight-GPU solution, a 4U box that offers 170 teraflops of FP16, based on the P100 hardware. The design takes advantage of NVIDIA’s NVLink interconnect by gluing together the eight GPUs in a cube mesh architecture. That enables the GPUs to talk with one another at 80 GB/second in RDMA fashion, without the need to go through the CPU host, which in this case is an Intel Xeon Broadwell processor.

Supermicro also announced a new P100-friendly 1U server, the 1028GQ-TXT, which supports up to four of the GPUs in a minimum amount of rack space. Once again, NVLink handles GPU-to-GPU communication in RDMA fashion. For a more CPU-heavy configuration, the company is also now offering the 2028TP-DTFR, a dual-node 2U server that can house two P100s per node.

Finally, if you’re interested in keeping all your GPUs for yourself, Supermicro is also selling a quad-P100 workstation in a 4U tower form factor. This product is aimed primarily at digital content creators, although someone could just as easily use it for small-scale HPC or deep learning work.

Not to be outdone, Inspur now has its own high-density P100 server, which is known as the AGX-2. This one puts up to eight of the NVIDIA GPUs in a 2U box, which conveniently comes with the option of air-liquid cooling. The AGX-2 also comes equipped with NVLink 2.0 technology, presumably to support the next-generation Volta GPUs that will feature this second-generation NVIDIA interconnect.

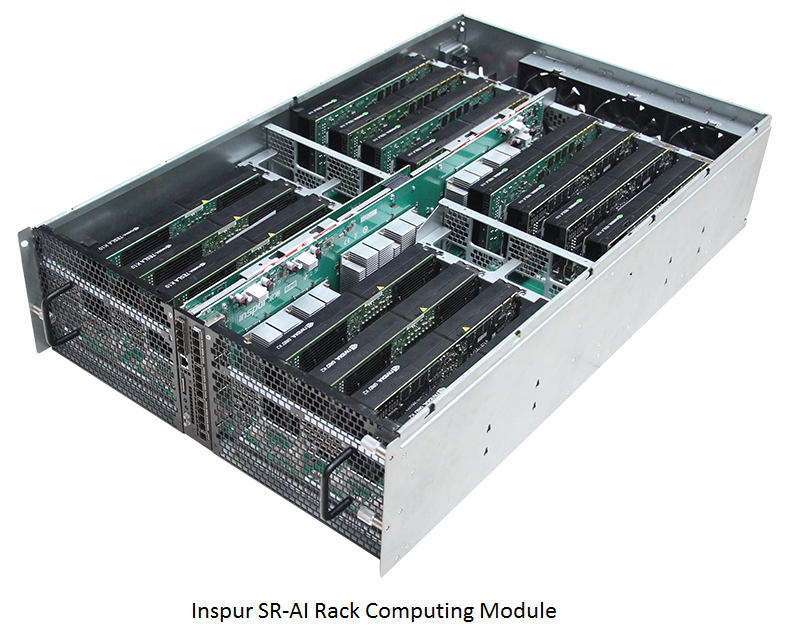

Besides the AGX-2, Inspur and Baidu have jointly announced the SR-AI Rack, a solution that puts the GPUs into a separate enclosure so that they can be scaled independently from their host CPUs. A PCIe switch is used to connect the CPU server or servers to one or more of the SR-AI Rack modules. A single SR-AI module can house up to 16 NVIDIA Tesla GPUs, and up to four modules can be daisy-chained together via the PCIe switch. Such a setup would put more than a petaflop of FP16 performance or over half a petaflop of FP32 performance in an exceedingly small footprint. GPUs in the cluster are directly linked with one another via a 100G RDMA interconnect, which is presumably based on EDR InfiniBand technology.
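The petaflop figures for a fully populated SR-AI setup follow from simple multiplication, which can be sketched as below. The per-GPU peak numbers are an assumption taken from NVIDIA's published P100 specifications rather than from the vendors' announcements, and the announcement does not say which P100 variant the SR-AI Rack uses, so both the NVLink (SXM2) and PCIe versions are shown. The function and constant names are purely illustrative.

```python
# Theoretical peak throughput per Tesla P100, in teraflops.
# Assumption: NVIDIA's published peak figures, not measured performance.
P100_PEAK_TFLOPS = {
    "sxm2": {"fp16": 21.2, "fp32": 10.6},  # NVLink variant
    "pcie": {"fp16": 18.7, "fp32": 9.3},   # PCIe variant
}


def aggregate_peak_tflops(gpu_count, variant, precision):
    """Return the combined theoretical peak (teraflops) of gpu_count P100s."""
    if gpu_count < 0:
        raise ValueError("gpu_count must be non-negative")
    return gpu_count * P100_PEAK_TFLOPS[variant][precision]


# SR-AI Rack limits as described in the article.
GPUS_PER_MODULE = 16
MAX_MODULES = 4
rack_gpus = GPUS_PER_MODULE * MAX_MODULES  # 64 GPUs in total

for variant in ("sxm2", "pcie"):
    fp16 = aggregate_peak_tflops(rack_gpus, variant, "fp16")
    fp32 = aggregate_peak_tflops(rack_gpus, variant, "fp32")
    print(f"{variant}: {fp16 / 1000:.2f} PF FP16, {fp32 / 1000:.2f} PF FP32")
```

Under these assumptions, 64 GPUs clear one petaflop of FP16 and half a petaflop of FP32 with either variant. The same helper applied to eight SXM2 parts, `aggregate_peak_tflops(8, "sxm2", "fp16")`, gives roughly the 170 teraflops quoted for Supermicro's 4028GR-TXR. These are peak numbers only; sustained throughput on real workloads will be lower.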

Finally, Inspur is also offering a blade server for deep learning work. The NX5460M4 HPC platform can now be configured with up to 16 GPUs spread across eight nodes in a 12U space.

All of these servers are aimed at the commercial AI market. According to Inspur, it accounts for more than 60 percent of the AI server market in China, and partners with some of the biggest internet companies there. These include such giants as Baidu, Alibaba, and Tencent, as well as smaller players like iFLYTEK, Qihoo 360, Sogou, Toutiao, and Face++.

Boston Limited is also using GTC as a launch point for its new Anna Pascal XL, a 4U server featuring up to eight P100 GPUs. It has four PCIe slots for InfiniBand, so GPUs in adjoining servers can converse using RDMA. This is not Boston’s first P100 offering. At ISC16 last year, the company announced the XL’s predecessor, the Anna Pascal, a 1U server with four P100 GPUs. As with the original machine, Boston is targeting both HPC and deep learning users with its newest offering.

All three companies will be on hand this week at the GPU Technology Conference, talking about their new servers, along with innumerable other vendors hawking their latest GPU-powered hardware and software. This year promises to be the biggest GTC ever, with an expected crowd of 8,000 attendees and 150 exhibitors. The four-day event will offer more than 500 speaking sessions, which, for reasons that are now obvious to anyone familiar with NVIDIA, will focus heavily on artificial intelligence and related areas.

The main conference proceedings begin on Tuesday, May 9 and will continue through Thursday, May 11. NVIDIA founder and CEO Jensen Huang will deliver a keynote on Wednesday, May 10, which we expect will contain some news of the upcoming Volta GPUs. Stay tuned…