June 8, 2018

By: Michael Feldman

The Department of Energy’s 200-petaflop Summit supercomputer is now in operation at Oak Ridge National Laboratory (ORNL). The new system is being touted as “the most powerful and smartest machine in the world.”

And unless the Chinese pull off some sort of surprise this month, the new system will vault the US back into first place on the TOP500 list when the new rankings are announced in a couple of weeks. Although the DOE has not revealed Summit’s Linpack result as of yet, the system’s 200-plus-petaflop peak number will surely be enough to outrun the 93-petaflop Linpack mark of the current TOP500 champ, China’s Sunway TaihuLight.

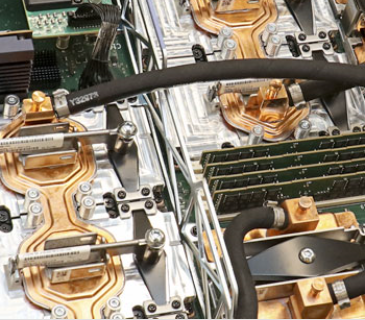

Even though the general specifications for Summit have been known for some time, it’s worth recapping them here: The IBM-built system is comprised of 4,608 nodes, each one housing two Power9 CPUs and six NVIDIA Tesla V100 GPUs. The nodes are hooked together with a Mellanox dual-rail EDR InfiniBand network, delivering 200 Gbps to each server.

Assuming all those nodes are fully equipped, the GPUs alone will provide 215 peak petaflops at double precision. And since each V100 also delivers 125 teraflops of mixed-precision Tensor Core operations, the system’s peak rating for deep learning performance is something on the order of 3.3 exaflops.
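For readers who want to check the arithmetic behind those peak figures, here is a rough back-of-the-envelope sketch. The node count, GPUs per node, and the 125-teraflop Tensor Core figure come from the specifications above; the 7.8-teraflop double-precision figure per V100 is an assumption taken from NVIDIA's published peak for the SXM2 part, not a number ORNL supplied, and the function name is purely illustrative.

```python
# Back-of-the-envelope peak performance for Summit's GPUs.
NODES = 4_608                    # from the system specifications
GPUS_PER_NODE = 6                # from the system specifications
TENSOR_TFLOPS_PER_GPU = 125.0    # mixed-precision Tensor Core peak per V100
FP64_TFLOPS_PER_GPU = 7.8        # ASSUMPTION: NVIDIA's published V100 (SXM2) peak


def peak_petaflops(nodes: int, gpus_per_node: int, tflops_per_gpu: float) -> float:
    """Aggregate peak in petaflops, assuming every node is fully populated."""
    return nodes * gpus_per_node * tflops_per_gpu / 1_000.0


gpu_count = NODES * GPUS_PER_NODE
fp64_pf = peak_petaflops(NODES, GPUS_PER_NODE, FP64_TFLOPS_PER_GPU)
tensor_pf = peak_petaflops(NODES, GPUS_PER_NODE, TENSOR_TFLOPS_PER_GPU)

print(f"GPUs in the system:        {gpu_count:,}")
print(f"Double-precision peak:     {fp64_pf:.1f} petaflops")
print(f"Mixed-precision peak:      {tensor_pf / 1_000.0:.2f} exaflops")
```

With these inputs the double-precision total lands at roughly 215 petaflops, and the mixed-precision total at about 3.5 exaflops when the nominal 125-teraflop figure is used, which is in the same ballpark as the roughly 3.3 exaflops cited above. These are theoretical peaks; delivered performance on real applications will be lower.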

Those exaflops are not just theoretical either. According to ORNL director Thomas Zacharia, even before the machine was fully built, researchers had run a comparative genomics code at 1.88 exaflops using the Tensor Core capability of the GPUs. The application was rummaging through genomes looking for patterns indicative of certain conditions. “This is the first time anyone has broken the exascale barrier,” noted Zacharia.

Of course, Summit will also support the standard array of science codes the DOE is most interested in, especially those having to do with things like fusion energy, alternative energy sources, material science, climate studies, computational chemistry, and cosmology. But since this is an open science system available to all sorts of research that frankly has nothing to do with energy, Summit will also be used for healthcare applications in areas such as drug discovery, cancer studies, addiction, and research into other types of diseases. In fact, at the press conference announcing the system’s launch, Zacharia expressed his desire for Oak Ridge to be “the CERN for healthcare data analytics.”

The analytics aspect dovetails nicely with Summit’s deep learning propensities, inasmuch as the former is really just a superset of the latter. When the DOE first contracted for the system back in 2014, the agency probably only had a rough idea of what they would be getting AI-wise. Although IBM had been touting its data-centric approach to supercomputing prior to pitching its Power9-GPU platform to the DOE, the AI/machine learning application space was in its early stages. Because NVIDIA made the decision to integrate the specialized Tensor Cores into the V100, Summit ended up being an AI behemoth, as well as a powerhouse HPC machine.

As a result, the system is likely to be engaged in a lot of cutting-edge AI research, in addition to its HPC duties. For the time being, Summit will only be open to select projects as it goes through its acceptance process. In 2019, the system will become more widely available, including its use in the Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program.

At that point, Summit’s predecessor, the Titan supercomputer, is likely to be decommissioned. Summit has about eight times the performance of Titan, with five times better energy efficiency. When Oak Ridge installed Titan in 2012, it was the most powerful system in the world and is still the fastest supercomputer in the US (well, now the second-fastest). Titan has NVIDIA GPUs too, but these are K20X graphics processors, and their machine learning capacity is limited to four single-precision teraflops per device. Fortunately, all the GPU-enabled HPC codes developed for Titan should port over to Summit pretty easily and should be able to take advantage of the much greater computational horsepower of the V100.

For IBM, Summit represents a great opportunity to showcase its Power9-GPU AC922 server to other potential HPC and enterprise customers. At this point, the company’s principal success with its Power9 servers has been with systems sold to enterprise and cloud clients, but generally without GPU accelerators. IBM’s only other big win for its Power9/GPU product is the identically configured Sierra supercomputer being installed at Lawrence Livermore National Lab. The company seems to think its biggest opportunity with its V100-equipped server is with enterprise customers looking to use GPUs for database acceleration or developing deep learning applications in-house.

Summit will also fulfill another important role – that of a development platform for exascale science applications. As the last petascale system at Oak Ridge, the 200-petaflop machine will be a stepping stone for a bunch of HPC codes moving to exascale machinery over the next few years. And now with Summit up and running, that doesn’t seem like such a far-off prospect. “After all, it’s just 5X from where we are,” laughed Zacharia.

Top image: Summit supercomputer; Bottom image: Interior view of node. Credit: ORNL