Oct. 26, 2017

By: Michael Feldman

NEC Corporation has launched SX-Aurora TSUBASA, the company’s latest vector-based product line for high performance computing.

The new platform is aimed at traditional HPC applications in science and engineering, but according to the company, it will also target AI, machine learning, and the broader field of “big data” analytics. Like other HPC vendors, NEC is hoping this wider range of applications expands the customer base for these systems.

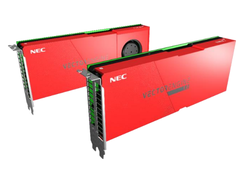

Like previous SX machinery, the TSUBASA platform derives its computational horsepower from NEC’s vector processor technology, but unlike previous versions, the vector chip has been relegated to a discrete PCIe coprocessor card, known as the Vector Engine. The server’s host CPU will be an x86 processor, which NEC is referring to as “the Vector Host”. It is intended to provide frontend housekeeping for the Vector Engine – things like MPI management, I/O, OS calls, and other kinds of scalar processing. The computational heavy lifting is expected to be done entirely on the vector hardware.

The hybrid host-accelerator architecture mirrors an industry trend toward coprocessor offloading, popularized by NVIDIA and its Tesla GPU product line. NEC, though, is claiming that unlike GPUs, its Vector Engine can execute complete applications, thus removing a principal communication bottleneck between the host and the accelerator. Whether that turns out to be the case or not remains to be seen, but given that the Vector Engine is a complete CPU in its own right, it’s likely to exhibit more versatility than a graphics chip.

The Vector Engine is an 8-core processor, which NEC says will deliver 2.45 teraflops, or about five times the performance of the vector chip in its previous generation SX-ACE system. Like the latest server GPUs from NVIDIA and AMD, the Vector Engine will use second-generation High Bandwidth Memory (HBM2) for extra-speedy data access. Aggregate memory bandwidth across the six HBM2 modules is said to be 1.2 TB/second.

TSUBASA servers may be equipped with 1, 2, 4 or 8 Vector Engine cards, with up to 64 of the cards in a rack. The latter is their "supercomputer model" (pictured above) which maxes out the computational density. NEC says customers can scale these out into arbitrarily large systems.
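For a rough sense of scale, the per-card figures quoted above can be multiplied out across the listed configurations. The sketch below is back-of-the-envelope arithmetic only: it assumes peak numbers add linearly across cards, ignores the host CPUs and the interconnect, and uses a hypothetical helper name (`aggregate_peak`) that does not come from NEC.

```python
# Per-card peak figures as quoted in the article.
TFLOPS_PER_CARD = 2.45   # peak teraflops per Vector Engine
TBPS_PER_CARD = 1.2      # aggregate HBM2 bandwidth per card, TB/second

SERVER_CARD_COUNTS = (1, 2, 4, 8)  # cards per server, per the article
MAX_CARDS_PER_RACK = 64            # "supercomputer model" rack


def aggregate_peak(cards):
    """Return (teraflops, TB/s) for `cards` Vector Engines.

    Assumption: peak numbers scale linearly with card count.
    """
    return cards * TFLOPS_PER_CARD, cards * TBPS_PER_CARD


if __name__ == "__main__":
    for n in SERVER_CARD_COUNTS + (MAX_CARDS_PER_RACK,):
        tflops, tbps = aggregate_peak(n)
        print(f"{n:>2} cards: {tflops:.2f} TF peak, {tbps:.1f} TB/s")
```

Under those assumptions, a fully populated rack works out to roughly 157 peak teraflops and about 77 TB/second of aggregate memory bandwidth.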

Compared to NVIDIA’s best accelerator, the 7.8 teraflop, 900 GB/second V100 GPU, the Vector Engine has about 31 percent of the peak performance of its GPU counterpart, but about 33 percent more memory bandwidth. Application performance may not reflect those differences, but for memory-bound codes, in particular, the Vector Engine could very well deliver better results, given that it offers considerably more memory bandwidth per flop.
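The percentages in that comparison follow directly from the quoted peak figures. The short sketch below reproduces them and also computes bytes of memory bandwidth per flop, the ratio that matters for memory-bound codes. The variable and function names are illustrative, and the inputs are vendor peak numbers rather than measured application results.

```python
# Peak figures as quoted in the article (teraflops, TB/second).
VE_TFLOPS, VE_TBPS = 2.45, 1.2      # NEC Vector Engine
V100_TFLOPS, V100_TBPS = 7.8, 0.9   # NVIDIA V100 (900 GB/second)


def bytes_per_flop(tflops, tbps):
    """Memory bandwidth available per unit of peak compute."""
    return tbps / tflops


# Vector Engine peak performance as a fraction of the V100's.
flops_fraction = VE_TFLOPS / V100_TFLOPS

# Vector Engine memory bandwidth advantage over the V100.
bandwidth_advantage = VE_TBPS / V100_TBPS - 1

ve_bpf = bytes_per_flop(VE_TFLOPS, VE_TBPS)
v100_bpf = bytes_per_flop(V100_TFLOPS, V100_TBPS)

if __name__ == "__main__":
    print(f"Peak flops vs. V100:  {flops_fraction:.0%}")
    print(f"Bandwidth advantage:  {bandwidth_advantage:.0%}")
    print(f"Bytes/flop, VE:       {ve_bpf:.2f}")
    print(f"Bytes/flop, V100:     {v100_bpf:.2f}")
```

On these peak numbers, the Vector Engine has roughly four times the memory bandwidth per flop of the V100, which is why a bandwidth-limited code could favor it despite the lower peak compute.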

Because of the proprietary nature of the vector processor and the system design, users will have to rely on NEC for things like compilers, MPI libraries, and other system software. The company is also offering its own distributed file system (NEC Scalable Technology File System) and job scheduler (NEC Network Queuing System V). No word yet on what machine learning and data analytics frameworks have been ported over to the platform. On the vector side at least, the company is promising binary compatibility with previous-generation SX systems.

If you’re interested in learning more about the new platform, NEC will be exhibiting the SX-Aurora TSUBASA at the upcoming supercomputing conference, SC17, in Denver, Colorado, from November 13 to 16.

Images courtesy of NEC Corporation