Aug. 15, 2017

By: Michael Feldman

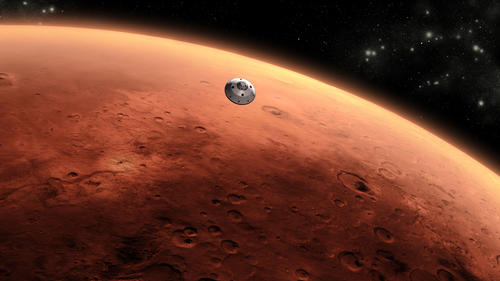

Using off-the-shelf servers, NASA and HPE are devising a way to allow a Mars-bound spacecraft to house an on-board supercomputer.

To test the concept, NASA has launched the SpaceX CRS-12 rocket containing HPE’s “Spaceborne Computer” as its payload. According to the company, the servers that make up the system are of the same type that power Pleiades, NASA’s flagship 7-petaflop supercomputer housed at the Ames Research Center in Mountain View, California. Pleiades is currently the 15th most powerful system in the world, according to the latest TOP500 rankings.

The Spaceborne Computer will be deposited at the International Space Station (ISS), where it will be part of a year-long experiment to find out how regular commodity servers can operate in the harsh conditions of outer space. Its one-year operation is designed to match the time it would take for a spacecraft to travel from the Earth to Mars and back.

During that time, it will run a series of supercomputing benchmarks, including High Performance Linpack, the High Performance Conjugate Gradients (HPCG) suite, and the NASA-derived NAS parallel benchmarks. Its operation will be compared to HPE servers of the same construction back on Earth. The idea is to make sure that the ISS-based system is able to deal with the realities of cosmic radiation, solar flares, unstable electrical power, and wide variations in temperature.

Instead of hardening the server components to deal with these hazards, which would add extra weight and require more space, HPE developed customized software that would dynamically throttle the system when the environment became unfavorable and would correct for errors common under harsh conditions. The only hardware mitigation is a water cooling system to prevent overheating, which will take advantage of the frigid temperatures in the vacuum of space. It’s not a foregone conclusion that all of this will work, inasmuch as no one has ever attempted to operate off-the-shelf servers in outer space before.
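HPE has not published how its throttling software works, so the following is only a rough sketch of the general idea of software-based throttling: read the environment, count the hazards, and drop to a lower operating mode as conditions worsen. The `Reading` fields, the thresholds, and the three mode names are all hypothetical and are not taken from the Spaceborne Computer.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    """One snapshot of environmental conditions (all fields hypothetical)."""
    radiation_level: float  # normalized units; 0.0 means quiet
    inlet_temp_c: float     # coolant inlet temperature in Celsius
    power_stable: bool      # True if the power feed is within tolerance


# Illustrative thresholds only; real limits would come from hardware specs.
RADIATION_LIMIT = 1.0
TEMP_LIMIT_C = 35.0


def choose_mode(reading: Reading) -> str:
    """Return 'full', 'throttled', or 'idle' based on how many hazards are active."""
    hazards = sum([
        reading.radiation_level > RADIATION_LIMIT,
        reading.inlet_temp_c > TEMP_LIMIT_C,
        not reading.power_stable,
    ])
    if hazards == 0:
        return "full"
    if hazards == 1:
        return "throttled"
    return "idle"
```

In this sketch a single hazard, such as a radiation spike, slows the system down, while two or more hazards at once park it until conditions improve. A real system would presumably also need hysteresis, so that it does not flip between modes on every noisy reading.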

The reason NASA is going to all this trouble is that the mission to Mars is going to require more real-time computational power than can be had from a traditional spacecraft setup. Eng Lim Goh, the principal investigator on the Spaceborne Computer project, describes the problem thusly:

“Mars astronauts won’t have near-instant access to high performance computing like those in low-earth orbit do — on average, the red planet is 26 light minutes round-trip away. Imagine waiting that long to get critical answers during a system failure; that simply isn’t an option. Having a supercomputer on board the spacecraft will allow our interplanetary explorers to meet some of these challenges in real time -- whether it be on-the-spot processing power for scalable simulation, analytics or artificial intelligence.”
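The round-trip figure in the quote follows from the speed of light and the Earth-Mars distance, which changes continuously as the two planets orbit the sun. As a quick check of the arithmetic, the sketch below converts a distance into a round-trip signal delay. The function name is made up for illustration, and it counts only light travel time, ignoring any processing or relay delays.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # defined value, in kilometers per second


def round_trip_minutes(distance_km: float) -> float:
    """Round-trip light travel time, in minutes, over a one-way distance in km."""
    one_way_seconds = distance_km / SPEED_OF_LIGHT_KM_S
    return 2 * one_way_seconds / 60
```

By this calculation, a 26-minute round trip corresponds to a one-way distance of roughly 234 million kilometers. Shorter or longer distances scale the delay proportionally.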

HPE is also using the NASA news to tout its Memory-Driven Computing architecture. The company released a working prototype of such a system in May, which housed 160 TB of shared memory across its 40 nodes. Although the Spaceborne Computer is not of such a design, the company thinks this kind of architecture will be the basis of the supercomputer that goes to Mars. The reasoning behind that assertion is that conventional systems will be too big and power-hungry to be practical for a flying datacenter.

For more background on this news, albeit with a generous helping of hyperbole, read the HPE Q&A with Eng Lim Goh about the Spaceborne Computer, as well as Kirk Bresniker’s blog post on the relevance of Memory-Driven Computing to the Mars mission.