Feb. 23, 2017

By: Michael Feldman

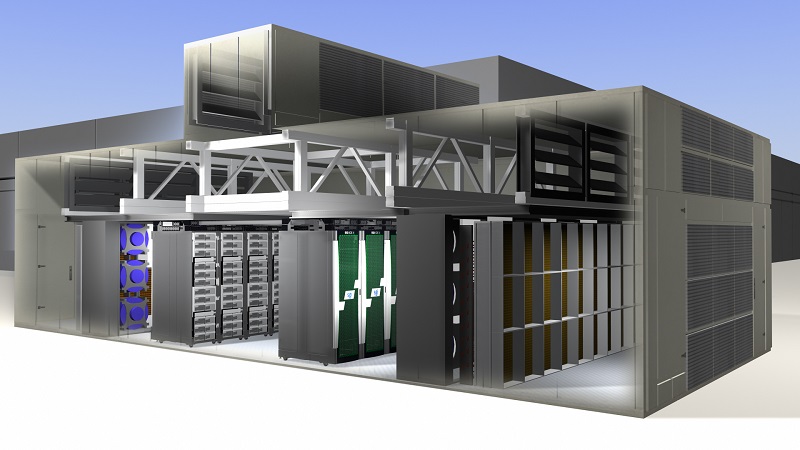

The National Aeronautics and Space Administration (NASA) has built a new modular supercomputing facility at its Ames Research Center in Silicon Valley that could be the template for future HPC infrastructure at the agency.

The new facility will house Electra, a relatively new SGI ICE X cluster that will offload some of the work from Pleiades, NASA’s top supercomputer. Thanks to the advanced design of the new facility, NASA expects to save about 1,300,000 gallons of water and a million kilowatt-hours of energy each year over the system's lifetime.

Electra debuted on the TOP500 rankings last November, turning in a Linpack result of 1.1 petaflops, which was enough to earn it the number 96 spot on the list. Pleiades is more than five times as powerful, with a Linpack mark of over 5.9 petaflops. It represents a six-year amalgamation of multiple generations of SGI ICE servers, and now sits at number 13 on the TOP500 list.

Although Electra and Pleiades share the same pedigree, Electra is the more efficient machine (even putting aside the savings in water and cooling, which we’ll get to shortly). Electra has a Linpack energy efficiency rating of about 2.5 gigaflops per watt, which puts it at number 51 on the latest Green500 list. Pleiades, meanwhile, delivers just a bit over 1.3 gigaflops per watt and sits at number 180 on that list. That is most likely a consequence of Pleiades being an older system, made up of a mix of Intel Xeon processors (E5-2670, E5-2680v2, E5-2680v3, E5-2680v4), most of which are less efficient than the newer chips. Electra is powered entirely by the latest Broadwell Xeon parts (E5-2680v4), and is equipped with a somewhat smaller ratio of memory to compute than its larger sibling.
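
To put those efficiency ratings in perspective, the power drawn during a Linpack run can be estimated by dividing the Linpack result by the gigaflops-per-watt figure. The sketch below does that with the rounded numbers quoted above; the function name is purely illustrative, and because the inputs are approximate ("about 2.5", "a bit over 1.3"), the outputs should be read as ballpark values rather than measured power.

```python
def implied_power_kw(linpack_petaflops, gflops_per_watt):
    """Estimate power during a Linpack run from performance and efficiency.

    Both arguments are the rounded figures quoted in the article, so the
    result is only an approximation.
    """
    gigaflops = linpack_petaflops * 1e6   # 1 petaflop = 1,000,000 gigaflops
    watts = gigaflops / gflops_per_watt   # (Gflop/s) / (Gflop/s per W) = W
    return watts / 1e3                    # watts -> kilowatts


electra_kw = implied_power_kw(1.1, 2.5)    # Electra: 1.1 PF at ~2.5 GF/W
pleiades_kw = implied_power_kw(5.9, 1.3)   # Pleiades: ~5.9 PF at ~1.3 GF/W

print(f"Electra:  ~{electra_kw:,.0f} kW")
print(f"Pleiades: ~{pleiades_kw:,.0f} kW")
```

On these rounded inputs, Electra comes out at roughly 440 kilowatts and Pleiades at roughly 4.5 megawatts, so the larger system draws about ten times the power to deliver a little over five times the Linpack performance.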

But a significant part of Electra’s efficiency is a result of how it’s cooled. Instead of relying on a traditional refrigeration system that consumes a lot of electricity and water, Electra employs fan technology that is said to use less than 10 percent of the energy of mechanical refrigeration. Since cooling can amount to as much as 50 percent of the power used by a supercomputer, those savings in electricity costs are significant.
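
A quick back-of-the-envelope calculation shows how those two percentages combine. The sketch below assumes the limiting values from the paragraph above (cooling at 50 percent of total power, fans at 10 percent of the energy of refrigeration) and an unchanged compute load; the function name is hypothetical, and real installations will differ.

```python
def total_power_saving(cooling_share, fan_fraction):
    """Fraction of total power saved when cooling energy is cut.

    cooling_share: fraction of total power spent on cooling (0.0 to 1.0).
    fan_fraction:  energy of the new cooling relative to the old (0.0 to 1.0).
    Assumes the compute load itself is unchanged.
    """
    return cooling_share * (1.0 - fan_fraction)


# Limiting case from the article: cooling is 50% of total power,
# and fans need 10% of the energy of mechanical refrigeration.
saving = total_power_saving(0.50, 0.10)
print(f"Total power saved: about {saving:.0%}")
```

Under those assumptions, overall power consumption falls by about 45 percent. A system whose cooling accounts for a smaller share of its power would see a proportionally smaller benefit.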

The cooling system also has the advantage of using much less water than a refrigeration set-up, a feature which is especially important to drought-prone California, but could also be useful for general cost reduction, even where water is abundant.

Bill Thigpen, chief of the Advanced Computing Branch at Ames’ NASA Advanced Supercomputing (NAS) facility, expects the power and cost savings to be significant. But beyond that, Thigpen believes the agency has an obligation to pursue greener HPC. “One of NASA’s key science goals is to expand our knowledge of Earth systems,” he said. “So we have a responsibility to do our part to lessen the impact of our technologies on the environment over the long term.” In other words, if you're going to be studying climate change, you shouldn't be contributing to it.

Beyond all those nice power and cooling features, the modular nature of the infrastructure also makes maintenance and expansion more flexible, not to mention more cost-effective. Thigpen thinks this design can “save about $35 million – about half the cost of building another big facility.” NASA is already considering expanding the facility by as much as 16 times, just to keep up with demand from the agency’s research community.