March 9, 2017

By: Michael Feldman

It was only a matter of time until someone came up with an Open Compute Project (OCP) design for a GPU-only accelerator box for the datacenter. That time has come.

In this case though, it was two someones: Microsoft and Facebook. This week at the Open Compute Summit in Santa Clara, California, both hyperscalers announced different OCP designs for putting eight of NVIDIA’s Tesla P100 GPUs into a single chassis. Both fill the role of a GPU expansion box that can be paired with CPU-based servers in need of compute acceleration. The idea is to disaggregate the GPUs and CPUs in cloud environments so that users may flexibly mix these processors in different ratios, depending upon the demands of the particular workload.

The principal application target is machine learning, one of the P100’s major strengths. An eight-GPU configuration of these devices will yield over 80 teraflops at single precision and over 160 teraflops at half precision.
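As a rough check on those aggregate figures, the arithmetic can be sketched as follows. The per-GPU numbers are assumptions not stated in the article: they are the peak ratings NVIDIA publishes for the NVLink version of the Tesla P100 (about 10.6 teraflops single precision and 21.2 teraflops half precision), and the function name is purely illustrative.

```python
# Assumed peak per-GPU throughput in teraflops for the NVLink version of the
# Tesla P100. These values are not given in the article.
P100_FP32_TFLOPS = 10.6
P100_FP16_TFLOPS = 21.2


def chassis_peak_tflops(per_gpu_tflops, gpus=8):
    """Return the theoretical peak for a chassis, assuming perfect scaling."""
    return per_gpu_tflops * gpus


fp32 = chassis_peak_tflops(P100_FP32_TFLOPS)
fp16 = chassis_peak_tflops(P100_FP16_TFLOPS)
print(f"FP32: {fp32:.1f} teraflops, FP16: {fp16:.1f} teraflops")
```

These are theoretical peaks that simply multiply the per-device rating by eight; sustained throughput on real training workloads would be lower.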

Source: Microsoft

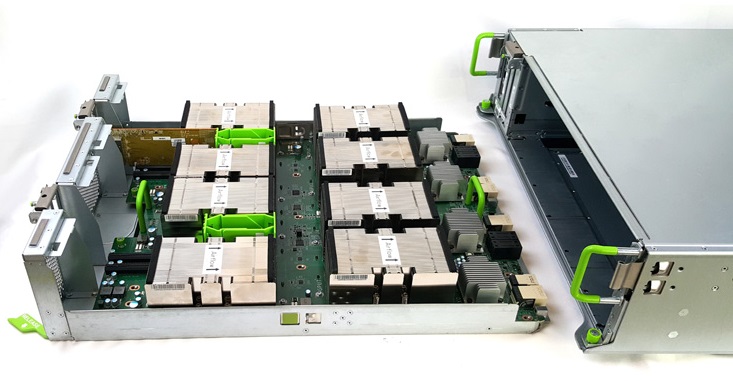

Microsoft’s OCP contribution is known as HGX-1. Its principal innovation is that it can dynamically serve up as many GPUs to a CPU-based host as it may need – well, up to eight, at least. It does this via four PCIe switches, an internal NVLink mesh network, plus a fabric manager to route the data through the appropriate connections. Up to four of the HGX-1 expansion boxes can be glued together for a total of 32 GPUs. Ingrasys, a Foxconn subsidiary, will be the initial manufacturer of the HGX-1 chassis.

The Facebook version, which is called Big Basin, looks quite similar. Again, P100 devices are glued together via an internal mesh, which Facebook describes as similar to the design of the DGX-1, NVIDIA’s in-house server designed for AI research. A CPU server can be connected to the Big Basin chassis via one or more PCIe cables. Quanta Cloud Technology will initially manufacture the Big Basin servers.

Source: Facebook

Facebook said it was able to achieve a 100 percent performance improvement on ResNet50, an image classification model, using Big Basin, compared to its older Big Sur server, which uses the Maxwell-generation Tesla M40 GPUs. Besides image classification, Facebook will use the new boxes for other sorts of deep learning training, such as text translation, speech recognition, and video classification, to name a few.

In Microsoft’s case, the HGX-1 appears to be the first of multiple OCP designs that will fall under its “Project Olympus” initiative, which the company unveiled last October. Essentially, Project Olympus is a related set of OCP building blocks for cloud hardware. Although HGX-1 is suitable for many compute-intensive workloads, Microsoft is promoting it for artificial intelligence work, calling it the Project Olympus “hyperscale GPU accelerator chassis for AI,” according to a blog post by Azure Hardware Infrastructure GM Kushagra Vaid.

Vaid also set the stage for what will probably become other Project Olympus OCP designs, hinting at future platforms that will include the upcoming Intel “Skylake” Xeon and AMD “Naples” processors. He also left open the possibility that Intel FPGAs or Nervana accelerators could work their way into some of these designs.

In addition, Vaid brought up the possibility of an ARM-based OCP server via the company’s engagement with chipmaker Cavium. The software maker has already announced it’s using Qualcomm’s new ARM chip, the Centriq 2400, in Azure instances. Clearly, Microsoft is keeping its cloud options open.