Oct. 24, 2016

By: m

IT giant Fujitsu has been developing a series of in-house technologies aimed at the burgeoning market for artificial intelligence and machine learning. Although the company has made less fanfare about its ambitions in this area than IBM, Google, and Microsoft, the Japanese multinational seems intent on expanding its datacenter business into this new high-value segment.

The step-up in AI focus has been especially noticeable over the past several months, when hardly a week went by without an announcement of a new technology or use case. In fact, Fujitsu has issued no fewer than 15 press releases on AI or machine learning since the beginning of 2016. Most are the result of technologies developed at Fujitsu Laboratories. The lab, the company’s independent research arm, employs over 1,400 people worldwide and has an annual budget of about $300 million.

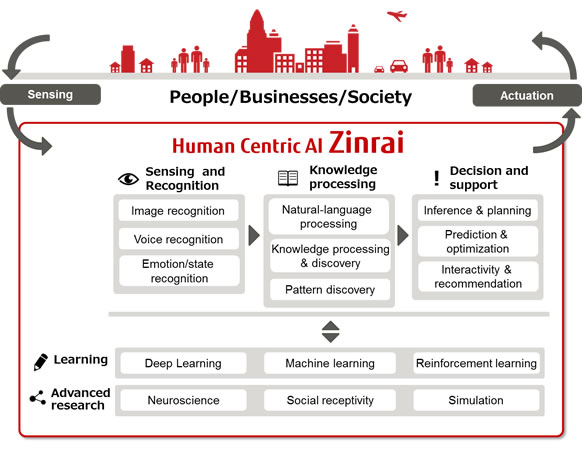

Some of this R&D work will end up in Fujitsu’s Human Centric AI Zinrai, the company’s custom framework that encapsulates multiple areas of artificial intelligence in order to support decision-making. (Zinrai, by the way, is a Japanese term meaning “as fast as lightning.”) The underlying approach is to incorporate component technologies like deep learning, visual recognition, and natural language processing into a coherent framework that can be used in commercial solutions and services.

Image: Fujitsu


As we noted, most of the R&D effort for AI is being done at Fujitsu Laboratories, which serves as the incubator for technologies that Fujitsu Ltd. later commercializes. We recap some of the latest developments below.

One of the more compelling efforts at the lab is a collaboration with the University of Toronto to create a new FPGA-powered architecture that can solve some of the same sorts of problems as a quantum computer, in particular, combinatorial optimization problems. Last week, the lab announced it had built a demonstration system which, according to the researchers, runs 10,000 times faster than a conventional computer running simulated quantum annealing software.

At the heart of this technology is an FPGA optimization circuit that uses probability theory to quickly search for paths to optimal states. If commercialized, such a system could be used to formulate economic and social policies, optimize investment portfolios, improve transportation routing, and enhance inventory management, just to name a few application areas. The lab hopes to deliver a working prototype by FY 2018.
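The announcement does not describe the circuit's internals. For readers unfamiliar with the problem class, here is a minimal software sketch of classical simulated annealing on a QUBO (quadratic unconstrained binary optimization) problem, the kind of combinatorial optimization that annealing hardware targets. It is a generic illustration under our own assumptions, not Fujitsu's FPGA design and not the simulated quantum annealing software used as the baseline; the names `anneal_qubo` and `qubo_energy`, the cooling schedule, and the Max-Cut toy problem are all hypothetical.

```python
import math
import random


def qubo_energy(Q, x):
    """Energy x^T Q x for a binary vector x; Q is a dict {(i, j): weight}."""
    return sum(w * x[i] * x[j] for (i, j), w in Q.items())


def anneal_qubo(Q, n, steps=20000, t_start=5.0, t_end=0.01, seed=0):
    """Hypothetical helper: minimize x^T Q x over x in {0,1}^n
    using single-bit-flip simulated annealing."""
    rng = random.Random(seed)
    x = [rng.randint(0, 1) for _ in range(n)]
    energy = qubo_energy(Q, x)
    best_x, best_energy = list(x), energy
    for step in range(steps):
        # Geometric cooling: temperature falls from t_start to t_end.
        t = t_start * (t_end / t_start) ** (step / max(steps - 1, 1))
        i = rng.randrange(n)
        x[i] ^= 1  # propose flipping one bit
        new_energy = qubo_energy(Q, x)
        delta = new_energy - energy
        # Metropolis rule: accept downhill moves, and uphill moves
        # with probability exp(-delta / t), which shrinks as t cools.
        if delta <= 0 or rng.random() < math.exp(-delta / t):
            energy = new_energy
            if energy < best_energy:
                best_x, best_energy = list(x), energy
        else:
            x[i] ^= 1  # reject: undo the flip
    return best_x, best_energy


# Toy example: Max-Cut on a 4-node cycle written as a QUBO.
# Each edge (i, j) adds -x_i - x_j + 2*x_i*x_j, which is -1 when the edge is cut.
edges = [(0, 1), (1, 2), (2, 3), (0, 3)]
Q = {}
for i, j in edges:
    Q[(i, i)] = Q.get((i, i), 0) - 1
    Q[(j, j)] = Q.get((j, j), 0) - 1
    Q[(i, j)] = Q.get((i, j), 0) + 2

best_x, best_energy = anneal_qubo(Q, n=4)
print(best_x, best_energy)  # an energy of -4 means all four edges are cut
```

For clarity the sketch re-sums the full energy after every flip; a practical solver would compute the energy change of a single flip locally instead.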

Fujitsu Laboratories is also developing a neural network technology that is said to greatly improve GPU memory efficiency. The idea here is to make better use of the limited on-board graphics memory in order to speed up the training of deep learning neural networks. According to the announcement, when tested on the popular AlexNet and VGGNet image recognition networks, the software “achieved reductions in memory usage volume of over 40% compared with before the application of this technology, enabling the scale of learning on a neural network for each GPU to be increased by up to roughly two times.” The company intends to incorporate the software into the Human Centric AI Zinrai framework in March 2017.

That work is slated to be paired with another GPU effort at the lab, one aimed at scaling out deep learning software to take advantage of large numbers of graphics processors. The technology combines supercomputer software that performs communication and computation in parallel with a new technique that changes processing methods based on the size of the shared data and the sequence of deep learning processing. The lab tested the technology on AlexNet, where it achieved learning speedups of 14.7x and 27x using 16 and 64 GPUs, respectively. The researchers claim these are “the world's fastest processing speeds, representing an improvement in learning speeds of 46% for 16 GPUs and 71% for 64 GPUs.” As with the GPU memory optimization work, the company plans to incorporate this technology into the Human Centric AI Zinrai framework.
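One way to read those speedup figures is as parallel efficiency, the speedup divided by the number of GPUs. This is our own arithmetic on the reported numbers, assuming both speedups are measured against a single GPU, which the announcement as quoted here does not state:

$$
E(N) = \frac{S(N)}{N}, \qquad
E(16) = \frac{14.7}{16} \approx 0.92, \qquad
E(64) = \frac{27}{64} \approx 0.42
$$

Under that assumption, each GPU does about 92% of its standalone work at 16 GPUs, but only about 42% at 64 GPUs.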

Turning to the application realm, Fujitsu Laboratories is building a Japanese natural language processing system aimed at customer service support. The new technology is said to extract the relationships between words in order to grapple with the multiple meanings, ambiguities, and other idiosyncrasies of the Japanese language. The researchers claim the customer support software they developed can autonomously conduct dialogue in this application area. The lab has conducted field trials with two companies and is preparing to hand the technology over for commercial use later this year. Over the last 10 months, Fujitsu has also unveiled other AI application developments in traffic control, genomic analysis, and neuroscience.

At this point, Fujitsu’s AI activities seem less a strategy than a series of tactical moves. There is nothing on par with IBM’s Watson and its related cognitive computing services, Google’s comprehensive machine learning capabilities, or Microsoft’s new FPGA-powered “supercomputer” aimed at AI in the cloud. The closest thing Fujitsu has in this regard is the aforementioned Human Centric AI Zinrai framework, into which the company is gathering a number of its machine learning technologies.

Whether this coalesces into a critical mass remains to be seen. In the meantime, Fujitsu is devoting plenty of resources to developing these technologies and collaborating with others, as needed, in an effort to get up to speed. In truth, it has little choice. If you want to be a global IT player in the 21st century, leaving AI to your competition is not an option.