July 12, 2017

By: Michael Feldman

After already shipping more than half a million of its next-generation Xeon products to customers, Intel officially launched its new Xeon scalable processor product line. The chipmaker is calling it the “biggest data center advancement in a decade.”

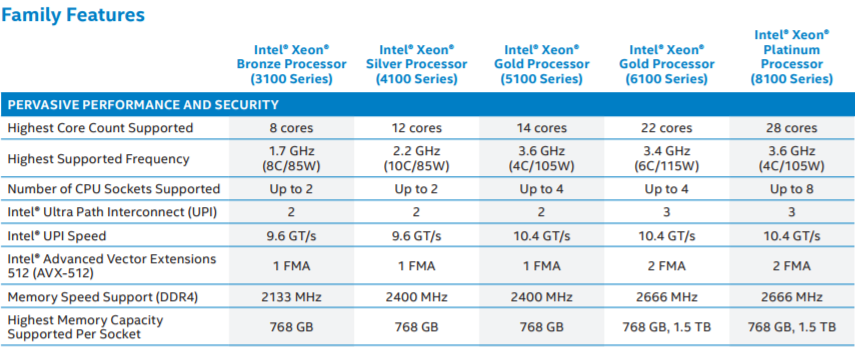

The feature set and broad contours of these new chips, which were previously code-named “Skylake,” have been known for some time. Back in May, we found out the new processors would be offered in four categories – Platinum, Gold, Silver, and Bronze – which generally represent decreasing core counts, performance, and capabilities as you progress through the metal monikers. This new hierarchy replaces the E3, E5, and E7 categories of previous Xeon product lines.

Source: Intel

Presiding over his first Xeon platform launch, Navin Shenoy, Intel’s new general manager of the Data Center Group, talked at length about what the company sees as the big growth opportunities for these latest Xeons: cloud computing, AI & analytics, and the new applications propelled by the transition from 4G to 5G wireless networks.

The first area, cloud computing, is where these datacenter processors are shipping in greatest volume these days. Shenoy noted that the number of Xeons being sold into cloud setups has doubled over the last three years. “We anticipate that nearly 50 percent of our Xeon shipments by the end of 2017 will be deployed into either public or private cloud infrastructure,” he said.

Some of these, no doubt, will be running high-performance computing workloads, and although little of the launch event was devoted to HPC, the new processors have plenty to offer in this regard. In particular, support for 512-bit Advanced Vector Extensions (AVX-512) and the integration of the Omni-Path interconnect on some of the Xeon models are notable additions for the performance crowd. Up until now, both features were available only in Intel’s Xeon Phi products, processors that are designed exclusively for high-performance workloads.

AVX-512 provides 32 vector registers, each of which is 512 bits wide. That is twice the width of the 256-bit vectors available with the older AVX and AVX2 instruction sets, which means performance can be as much as doubled for many types of scientific simulations that rely heavily on floating point operations. AVX-512 also supports integer vectors, which can be applied to applications such as genomics analysis, virtual reality, and machine learning.
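To make the width comparison concrete, here is a minimal sketch of the arithmetic behind the "twice the width" claim: the number of elements one vector register holds is simply the register width divided by the element width. The function and table names are illustrative, not part of any Intel tool, and the lane count is only an upper bound on per-instruction throughput, not a prediction of application speedup.

```python
# Register widths in bits, as described in the article.
REGISTER_BITS = {"AVX/AVX2": 256, "AVX-512": 512}

# Common element widths in bits (illustrative selection).
ELEMENT_BITS = {"float64": 64, "float32": 32, "int32": 32}


def lanes(register_bits: int, element_bits: int) -> int:
    """Number of elements that fit in one vector register."""
    return register_bits // element_bits


def lane_table() -> dict:
    """Lanes per register for each instruction set and element type."""
    return {
        isa: {name: lanes(bits, width) for name, width in ELEMENT_BITS.items()}
        for isa, bits in REGISTER_BITS.items()
    }


if __name__ == "__main__":
    for isa, row in lane_table().items():
        print(isa, row)
```

A 512-bit register holds eight double-precision values where a 256-bit register holds four, which is where the theoretical factor of two comes from. Real codes see less than that when they are limited by memory bandwidth or contain work that does not vectorize.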

This feature can offer some serious speedups at the application level. Intel claimed a 1.72x performance boost on LAMMPS, a popular molecular dynamics code, when running on the 24-core Xeon Platinum 8168 processor, compared to the same code running on the previous generation Xeon E5-2697 v4 (“Broadwell”) chip. Overall, the company recorded an average 1.65x performance improvement across a range of datacenter workloads, which included applications like in-memory analytics (1.47x), video encoding (1.9x), OLTP database management (1.63x), and packet inspection (1.64x).

The Linpack numbers are certainly impressive on the high-end SKUs. A server equipped with two 28-core Xeon Platinum 8180 processors, running at 2.5 GHz, turned in a result of 3007.8 gigaflops on the benchmark, which means each chip can deliver about 1.5 Linpack teraflops. That’s more than twice the performance of the original 32-core “Knights Ferry” Xeon Phi processor, and even better than the 50-core “Knights Corner” chip released in 2012. Even so, the current “Knights Landing” generation of Xeon Phi tops out at about 2 teraflops on Linpack using 72 cores. Keep in mind that the most expensive Knights Landing processor can be had for less than $3,400 nowadays, while the Xeon Platinum 8180 is currently priced at $10,009. So if it’s cheap flops you’re after, and your codes scale to 60 cores or more, Knights Landing is the pretty obvious choice.
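The price-performance comparison can be worked through with a few lines of arithmetic. The sketch below uses only the figures quoted in this article and makes two simplifying assumptions: the two-socket Linpack result is split evenly between the chips, and Knights Landing is taken at its rough 2-teraflop figure and its $3,400 price ceiling. It compares processor list prices only and ignores the rest of the system.

```python
def dollars_per_teraflop(price_usd: float, linpack_gflops: float) -> float:
    """Chip price divided by Linpack performance expressed in teraflops."""
    return price_usd / (linpack_gflops / 1000.0)


# Two-socket Platinum 8180 result from the article, split evenly per chip
# (an assumption; the article rounds this to "about 1.5 teraflops").
PLATINUM_8180_GFLOPS = 3007.8 / 2
PLATINUM_8180_PRICE = 10009

# Knights Landing: roughly 2 teraflops, priced at less than $3,400,
# so this cost figure is an upper bound.
KNL_GFLOPS = 2000.0
KNL_PRICE = 3400

xeon_cost = dollars_per_teraflop(PLATINUM_8180_PRICE, PLATINUM_8180_GFLOPS)
knl_cost = dollars_per_teraflop(KNL_PRICE, KNL_GFLOPS)

if __name__ == "__main__":
    print(f"Xeon Platinum 8180: ${xeon_cost:,.0f} per Linpack teraflop")
    print(f"Knights Landing:    ${knl_cost:,.0f} per Linpack teraflop (upper bound)")
    print(f"Ratio: {xeon_cost / knl_cost:.1f}x")
```

On these numbers the Platinum 8180 costs several times as much per Linpack teraflop as Knights Landing, which is the gap the "cheap flops" remark points to.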

Intel is also promising better deep learning performance on the new silicon. The company says it is seeing 2.2 times faster speeds on both training and inferencing neural networks compared to the previous generation products. Ultimately, the Xeon line is not meant to compete with Intel’s upcoming products specifically aimed at these workloads, but it can serve as a general-purpose platform for these applications where specialized infrastructure is not warranted or not obtainable.

The integration of the high-performance Omni-Path (OPA) fabric interface is another Xeon Phi feature that was slid into the new Xeons. It’s only available on some of the Platinum and Gold models, not the lesser Silver and Bronze processors, since these are unlikely to be used in HPC setups. OPA integration adds about $155 to the cost of the chip compared to its corresponding non-OPA SKU. Since a separate adapter retails for $958 and takes up 16 lanes of the processor’s PCIe budget, it’s a no-brainer to opt for the integrated version if you’re committed to Omni-Path. If you’re considering InfiniBand as well, that decision becomes trickier.
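The Omni-Path trade-off is also easy to tally. The sketch below uses the two prices and the lane count quoted above; the function name and the idea of counting by "fabric ports" are illustrative assumptions, and the totals ignore cables, switches, and any volume discounts.

```python
INTEGRATED_PREMIUM_USD = 155   # extra cost of an OPA-integrated SKU (from the article)
DISCRETE_ADAPTER_USD = 958     # retail price of a separate adapter (from the article)
ADAPTER_PCIE_LANES = 16        # PCIe lanes a separate adapter occupies (from the article)


def integrated_vs_discrete(fabric_ports: int) -> dict:
    """Savings and PCIe lanes freed by choosing integrated OPA for each port."""
    return {
        "usd_saved": fabric_ports * (DISCRETE_ADAPTER_USD - INTEGRATED_PREMIUM_USD),
        "pcie_lanes_freed": fabric_ports * ADAPTER_PCIE_LANES,
    }


if __name__ == "__main__":
    # Hypothetical cluster size, chosen only for illustration.
    print(integrated_vs_discrete(1000))
```

Each integrated port saves $803 and leaves 16 PCIe lanes available for other devices, which is why the integrated SKU is the easy call once Omni-Path has been chosen.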

Source: Intel

Of the more than 500,000 Xeon scalable processors already shipped, about 8,600 of those went into three TOP500 supercomputers. One of these is the new 6.2-petaflop MareNostrum 4 system that recently came online at the Barcelona Supercomputing Center. That system, built by Lenovo, is equipped with more than 6,000 24-core Xeon Platinum 8160 processors. The other two machines are powered by 20-core Xeon Gold chips: one, a 1.75-petaflop HPE machine running at BASF in Germany, and the other, a 717-teraflop system constructed by Intel and running in-house.

Other early customers include AT&T, Amazon, Commonwealth Bank, Thomson Reuters, Montefiore Health, and the Broad Institute. The first customer for the new Xeons was Google, which started deploying them into its production cloud back in February. According to Google Platforms VP Bart Sano, cloud customers are already using the silicon for applications like scientific modeling, engineering simulations, genomics research, finance, and machine learning. On these workloads, performance improvements of between 30 and 100 percent are being reported.

Prices for the new chips start at $213 for a six-core Bronze 3104 and extend all the way up to $13,011 for the 28-core Platinum 8180M processor. The fastest clock – 3.6 GHz – is found on the four-core Platinum 8156 chip. A complete list of available processors can be found here.