Aug. 24, 2016

By: Michael Feldman

IBM is looking to take a bigger slice out of Intel’s lucrative server business with Power9, the company’s latest and greatest processor for the datacenter. Scheduled for initial release in 2017, the Power9 promises more cores and a hefty performance boost compared to its Power8 predecessor. The new chip was described at the Hot Chips event, which took place in Silicon Valley this week.

The Power9 will end up in IBM’s own servers, and if the OpenPower gods are smiling, in servers built by other system vendors. Although none of these systems have been described in any detail, we already know that bushels of IBM Power9 chips will end up in Summit and Sierra, two 100-plus-petaflop supercomputers that the US Department of Energy will deploy in 2017-2018. In both cases, most of the FLOPS will be supplied by NVIDIA Volta GPUs, which will operate alongside IBM’s processors.

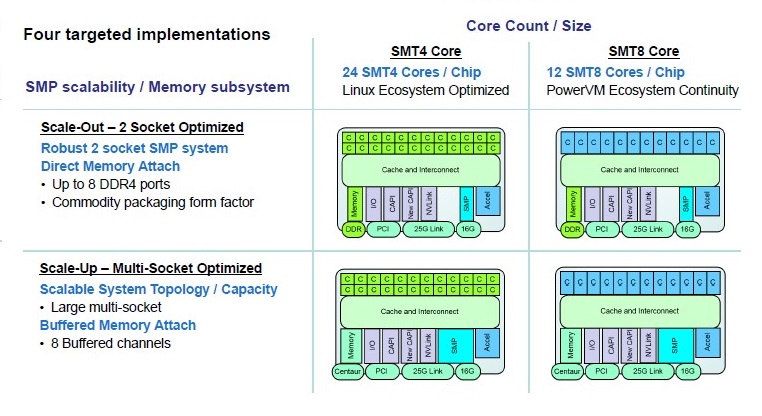

The Power9 will be offered in two flavors: one for single- or dual-socket servers for regular clusters, and the other for NUMA servers with four or more sockets, supporting much larger amounts of shared memory. IBM refers to the dual-socket version as the scale-out (SO) design and the multi-socket version as the scale-up (SU) design. They basically correspond to the Xeon E5 (EP) and Xeon E7 (EX) processor lines, although Intel is apparently going to unify those lines post-Broadwell.

The SU Power9 is aimed at mission-critical enterprise work and other applications where large amounts of shared memory are desired. It has extra RAS features, buffered memory, and will tend to have fewer cores running at faster clock rates. As such, it carries on many of the traditions of the Power architecture through Power8. The SU Power9 is going to be released in 2018, well after the SO version hits the streets.

The SO Power9 is going after the Xeon dual-socket server market in a more straightforward manner. These chips will use direct attached memory (DDR4) with commodity DIMMs, instead of the buffered memory setup mentioned above. In general, this processor will adhere to commodity packaging so that Power9-based servers can utilize industry standard componentry. This is the platform destined for large cloud infrastructure and general enterprise computing, as well as HPC setups. It’s due for release sometime next year.

Distilling out the differences between the two varieties, here are the basics of the new Power9 (Power8 specs in parentheses for comparison):

- 8 billion transistors (4.2 billion)

- Up to 24 cores (Up to 12 cores)

- Manufactured using 14nm FinFET (22nm SOI)

- Supports PCIe Gen4 (PCIe Gen3)

- 120 MB shared L3 cache (96 MB shared L3 cache)

- 4-way and 8-way simultaneous multithreading (8-way simultaneous multithreading)

- Memory bandwidth of 120 or 230 GB/sec (230 GB/sec)
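For readers who want the generation-over-generation multiples implied by the list above, here is a minimal Python sketch. It uses only the figures quoted in the list; the `specs` dictionary and the `ratio` helper are illustrative names, not anything from IBM.

```python
# Figures as quoted in the list above, stored as (Power9, Power8).
specs = {
    "transistors (billions)": (8.0, 4.2),
    "max cores": (24, 12),
    "shared L3 cache (MB)": (120, 96),
}


def ratio(new, old):
    """Return the Power9-to-Power8 multiple, rounded to two decimal places."""
    return round(new / old, 2)


# Map each spec name to its Power9/Power8 multiple.
ratios = {name: ratio(p9, p8) for name, (p9, p8) in specs.items()}

for name, r in ratios.items():
    print(f"{name}: {r}x")
```

On these numbers, the core count doubles while the transistor count grows a little less than twofold and the L3 cache grows by a quarter.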

From the looks of things, IBM spent most of the extra transistor budget it got from the 14nm shrink on extra cores and a little bit more L3 cache. New on-chip data links were also added, with an aggregate bandwidth of 7 TB/sec, which is used to feed each core at the rate of 256 GB/sec in a 12-core configuration. The bandwidth fans out in the other direction to supply data to memory, additional Power9 sockets, PCIe devices, and accelerators. Speaking of which, there is special support for NVIDIA GPUs in the form of NVLink 2.0, which promises much faster communication speeds than vanilla PCIe. An enhanced CAPI interface is also provided for accelerators that support that standard.
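As a rough sanity check on the on-chip bandwidth figures quoted above, the following Python sketch compares the combined per-core feed rate with the aggregate number. It assumes decimal units (1 TB = 1,000 GB), and the variable names are illustrative. The article does not say exactly how the 7 TB/sec aggregate is accounted for, so treat the resulting share as a back-of-the-envelope comparison rather than a statement about the chip's design.

```python
# Figures quoted in the paragraph above.
AGGREGATE_GB_S = 7 * 1000  # 7 TB/sec, assuming 1 TB = 1000 GB
PER_CORE_GB_S = 256        # feed rate per core
CORES = 12                 # the 12-core configuration

# Combined bandwidth feeding all cores.
core_total_gb_s = PER_CORE_GB_S * CORES

# Fraction of the quoted aggregate that the core-side total represents.
share = core_total_gb_s / AGGREGATE_GB_S

print(f"Core-side total: {core_total_gb_s} GB/sec")
print(f"Share of quoted aggregate: {share:.0%}")
```

The core-side total comes to roughly 3 TB/sec, somewhat under half of the quoted aggregate.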

The accelerator story is one of the key themes of the Power9, which IBM is touting as “the premier platform for accelerated computing.” In that sense, IBM is taking a different tack than Intel, which is bringing accelerator technology on-chip and making discrete products out of it, as it has done with Xeon Phi and is in the process of doing with Altera FPGAs. By contrast, IBM has settled on the host-coprocessor model of acceleration, which offloads special-purpose processing to external devices. This has the advantage of flexibility; the Power9 can connect to virtually any type of accelerator or special-purpose coprocessor as long as it speaks PCIe, CAPI or NVLink.

Thus the Power9 sticks with an essentially general-purpose design. As a standalone processor it is designed for mainstream datacenter applications (assuming that phrase has meaning anymore). From the perspective of floating point performance, it is about 50 percent faster than Power8, but that doesn’t make it an HPC chip, and in fact, even a mid-range Broadwell Xeon (E5-2600 V4) would likely outrun a high-end Power9 processor on Linpack. Which is fine. That’s what the GPUs and NVLink support are for.

If there is any deviation from the general-purpose theme, it’s in the direction of data-intensive workloads, especially analytics, business intelligence, and the broad category of “cognitive computing” that IBM is so fond of talking about. Here the Power processors have had something of an historical advantage in that they offered much higher memory bandwidth than their Xeon counterparts: about two to four times higher, in fact. The SO Power9 supports 120 GB/sec of memory bandwidth; the SU version, 230 GB/sec. The Power9 also comes with a very large (120 MB) L3 cache, which is built with eDRAM technology that supports speeds of up to 256 GB/sec. All of which serves to greatly lessen the memory bottleneck for data-intensive applications.

According to IBM, Power9 was about 2.2 times faster than Power8 for graph analytics workloads and about 1.9 times faster for business intelligence workloads. That’s on a per socket basis, comparing a 12-core Power9 to a 12-core Power8 at the same 4GHz clock frequency. Which is a pretty impressive performance bump from one generation to the next, although it should be pointed out that IBM offered no comparisons against the latest Broadwell Xeon chips.

But IBM needn’t worry too much about that. When the Power9 chips start rolling off the fabs next year, they will be facing competition from Intel’s next-generation Skylake Xeons. Skylake is a new microarchitecture and is shaping up to include some big new features, including a new memory architecture (3D XPoint?) and an integrated network fabric. AMD should certainly have its 32-core Zen server processor in the field by then as well, offering another x86 competitor to the Power9. ARM server vendors like Cavium, Applied Micro, Qualcomm, Marvell, Broadcom, and Phytium are also eyeing the server market and will be putting new silicon into the field over the next couple of years. Which is just a long way of saying that IBM has its work cut out for it.