March 6, 2017

By: Michael Feldman

IBM has revealed its intention to commercialize the quantum computing technology being developed in its research division. Although the company didn’t offer a definitive timeline or even a roadmap for the product set, it set down some markers on what such an endeavor would entail.

In a nutshell, IBM plans to build systems on the order of 50 qubits in “the next few years” and make them commercially available as part of its cloud offering. These “IBM Q” machines will be universal quantum computers, rather than the kinds of quantum annealing systems that D-Wave offers today. As such, they promise to be much more powerful and have a wider application scope. At 50 qubits, they should be able to perform some types of computation that would be impossible to do on a classical system of any size. In general, those are problems where the solution space encompasses so many possibilities that good old binary digits don’t offer much help. Some of the most notable commercial application areas include drug discovery, financial services, artificial intelligence, computer security, materials discovery, and supply chain logistics.
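
To put the 50-qubit figure in perspective, here is a rough back-of-the-envelope sketch of how much memory a classical machine would need just to store the full state of an n-qubit system. It assumes a brute-force state-vector simulation with one 16-byte complex amplitude per basis state; the function name and the byte size are illustrative assumptions, not anything IBM has published, and smarter simulation methods can do better on some circuits.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold all 2**n amplitudes of an n-qubit state vector.

    Assumes one double-precision complex number (16 bytes) per amplitude,
    which is an illustrative choice rather than a fixed requirement.
    """
    return (2 ** num_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    # Compare a small device with the roughly 50-qubit systems discussed above.
    for n in (5, 50):
        size = statevector_bytes(n)
        print(f"{n} qubits: {size:,} bytes ({size / 2**50:.6g} PiB)")
```

Under these assumptions, five qubits need 512 bytes, while 50 qubits need 2^54 bytes, or 16 PiB, for the state vector alone. Every additional qubit doubles that figure, which is why brute-force classical simulation runs out of room so quickly.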

For IBM, this represents the second step in an effort that began last May, when the company made its five-qubit platform freely available to the public via the company’s cloud. Such accessibility attracted more than 40,000 users, who, in aggregate, have run over 275,000 quantum computing experiments on the device. A number of courses and research studies have been developed around the platform at various institutions, including the Massachusetts Institute of Technology (MIT) in the US, the University of Waterloo in Canada, and École polytechnique fédérale de Lausanne (EPFL) in Switzerland.

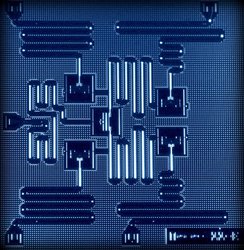

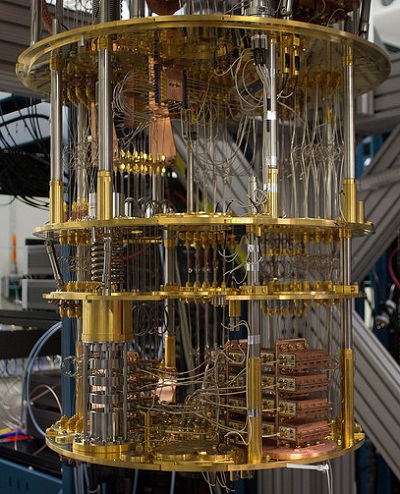

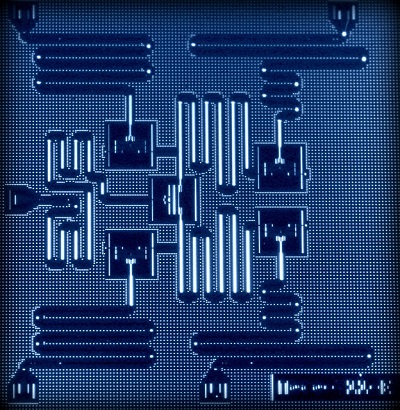

That early system has relatively few qubits and is not able to beat a classical computer at anything meaningful, but it has been able to demonstrate the potential of the technology. It’s based on IBM’s current quantum computing platform, which uses superconducting circuitry developed in-house and manufactured in the company’s own fabs. The technology is silicon-based, but incorporates niobium as well.

According to Dave Turek, Vice President of Exascale Systems at IBM, the research division is toying with at least six more variants of the technology, and most of these experiments are already sporting more than five qubits. Turek says they are trying to get a feel for the interactions between the various underlying materials, interconnect topologies and other features that make such a system useful and stable.

There are several factors that you have to manage simultaneously to increase the size of the system. “Just producing qubits is actually a pretty trivial thing to do at this stage for us,” explained Turek. “But to produce them in a context where you are demonstrating entanglement and preserving coherence and scalability – therein lies the trick.”

A big part of that trick is ensuring the “universality” of the platform. IBM is intent on this aspect and wants to make sure everyone knows they are not offering something akin to a D-Wave quantum annealer. To drive that point home, they’ve developed the metric of “quantum volume,” which is essentially a way of measuring the quantum-ness of a computing system. It incorporates not just the number of qubits, but also the interconnectivity in the device, the number of operations that can be run in parallel, and the number of gates that can be applied before errors make the device behave essentially classically. Whether this will catch on as the Linpack of quantum computing remains to be seen.

Setting aside the business roadmap for a moment, the company also released a new API for the initial cloud-based system. It promises to help developers more easily exploit the technology without having to know the intricacies of quantum physics. In concert with the new API is an upgraded simulator, which can model a device with up to 20 qubits. Although you won’t get the performance of real hardware, it will allow developers to play with problems that don’t fit in a five-qubit system. Those two additions just touch on how IBM has been building out the software ecosystem over the past year or so. You can get a more complete picture by visiting the company’s quantum computing programming webpage.

Although IBM made no mention of how its IBM Q products would be positioned relative to its traditional system offerings, Turek did speculate that early versions could be employed as accelerators to classical systems, where offloading certain algorithms onto the qubits made sense. Certainly, IBM has some experience with this model inasmuch as it employs NVIDIA GPUs as floating point accelerators in its own Power servers today. At least in the short run, Turek thinks it’s likely that these quantum systems will be used as an adjunct to conventional HPC machines to do quantum computations.

The analogy breaks down a bit when you realize the GPUs are just faster than their host processors (by one to three orders of magnitude, at most) for certain types of computations, whereas quantum processors will be able to execute algorithms that will not run on a classical host in any reasonable amount of time. That’s motivation enough for IBM to keep this technology in-house.

In fact, the company sees quantum computing as one of the major technology pillars of its future, alongside its Watson and blockchain products in terms of strategic importance. The biggest challenge for IBM, as always, will be the competition. Setting aside D-Wave, there are perhaps 10 to 20 quantum projects that could be fairly close to a commercial release. They come from rivals as diverse as Google, Microsoft and Intel.

IBM is as well positioned as any of these. It’s been working on the problem for nearly four decades and has accumulated expertise in all the adjacent areas along the way: chip technology, superconductivity, application domains, and system software. It has narrowed its focus to the most promising technologies and thrown the less promising ones over the side. And now the company is at the point where, as Turek says, “we can see the horizon.”

Images: Cloud-based experimental system; Five-qubit chip. Source: IBM.