April 25, 2017

By: Michael Feldman

A 30-node cluster of GPU-accelerated IBM “Minsky” servers has performed a billion-cell petroleum reservoir simulation in 92 minutes. Previous attempts at this scale required 20 hours using a large supercomputer with thousands of processors.

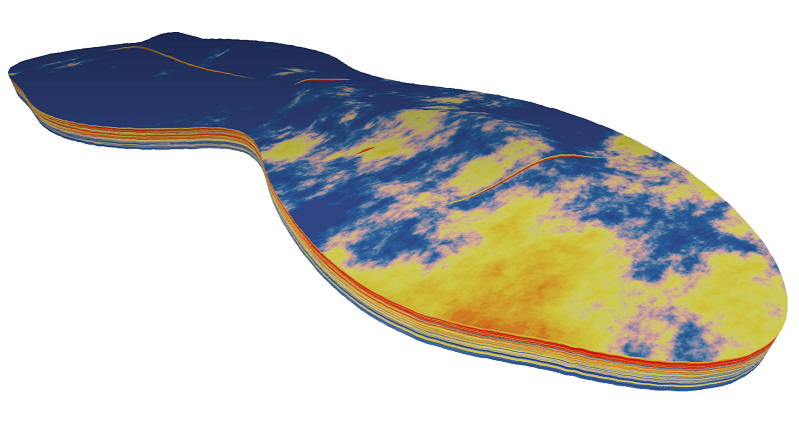

Source: IBM

Oil and gas companies rely on reservoir simulations to identify the most productive spots to drill. Because these simulations are so computationally demanding, typical runs use 10 to 100 million cells. Saudi Aramco did, however, achieve a trillion-cell reservoir simulation last year using its Cray XC40 supercomputer.

Even so, a billion-cell simulation is rarely practical on the HPC systems that most oil and gas companies operate. The resolution such a detailed model provides can nonetheless help lower production costs and environmental risks, allowing drillers to extract more oil from a given reservoir.

The billion-cell run took advantage of the latest Tesla P100 GPUs from NVIDIA. To make the most of these devices, the simulation was performed using ECHELON, a petroleum reservoir simulation package from Stone Ridge Technology that is highly optimized for GPU acceleration.

Stone Ridge Technology president Vincent Natoli noted that the fast turnaround time now possible for such a detailed simulation will enable reservoir engineers to run more models, making oil drilling and production more efficient. “By increasing compute performance and efficiency by more than an order of magnitude, we’re democratizing HPC for the reservoir simulation community,” said Natoli.

The Minsky servers used for the billion-cell proof point were outfitted with a total of 60 POWER8 CPUs and 120 NVIDIA P100 GPUs. Although the system comprised just 30 nodes, the GPUs supplied about 600 teraflops of peak performance, which is on par with that of a much larger supercomputer utilizing only CPUs.
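For readers who want to check the arithmetic, the short Python sketch below works only from the figures quoted in this article: 30 nodes, 60 CPUs, 120 GPUs, roughly 600 teraflops of aggregate GPU peak, and runtimes of 20 hours versus 92 minutes. The variable and function names are illustrative, and the per-GPU figure is simply the quoted total divided by the GPU count, not a vendor specification.

```python
# Back-of-the-envelope check using only the numbers quoted in the article.
NODES = 30
CPUS = 60
GPUS = 120
GPU_PEAK_TFLOPS_TOTAL = 600.0   # "about 600 teraflops" (approximate)
PRIOR_RUNTIME_MIN = 20 * 60     # earlier billion-cell effort: 20 hours
NEW_RUNTIME_MIN = 92            # Minsky cluster run: 92 minutes


def summarize():
    """Derive per-node and per-GPU figures plus the wall-clock speedup."""
    return {
        "cpus_per_node": CPUS / NODES,
        "gpus_per_node": GPUS / NODES,
        "tflops_per_gpu": GPU_PEAK_TFLOPS_TOTAL / GPUS,
        "tflops_per_node": GPU_PEAK_TFLOPS_TOTAL / NODES,
        "speedup": PRIOR_RUNTIME_MIN / NEW_RUNTIME_MIN,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value:.1f}")
```

Under these figures, each node carries two CPUs and four GPUs, and the run finished roughly 13 times faster than the 20-hour effort, which is consistent with Natoli's "more than an order of magnitude" remark. The comparison covers wall-clock time only; the article does not say whether the earlier run used the same reservoir model.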

As a result of the energy efficiency and computational density of the P100 GPUs, the system ran the simulation using just 1/10 the power and 1/100 the space of previous attempts at billion-cell runs. According to Sumit Gupta, IBM Vice President, High Performance Computing & Analytics, one recent effort at this scale employed 700,000 processors on a supercomputer that occupies the space equivalent to almost half a football field. “Stone Ridge did this calculation on two racks of IBM machines that could fit in the space of half a ping-pong table,” he said.