July 14, 2017

By: Michael Feldman

WekaIO, a startup offering a cloud-based storage platform that can support exabytes of data in a single namespace, emerged from stealth earlier this week. The company is touting the new product as “the world’s fastest distributed file system.”

Founded by Liran Zvibel, Omri Palmon, and Maor Ben-Dayan in 2013, WekaIO is headquartered in San Jose, California, and does its engineering there and in Tel Aviv, Israel. The startup has attracted more than $32 million in venture capital from Qualcomm, Walden Riverwood, Gemini Israel, and Norwest Venture Partners, in addition to a number of individual investors.

The cloud-based software platform, known as Matrix, is aimed at a wide swath of performance-demanding workloads, such as financial modeling, media rendering, engineering, bioinformatics, scientific simulations, and data analytics, as well as a variety of web applications. It is currently deployed at two major media and entertainment studios and TGen, a genomics research company focused on human health concerns.

“TGen is dedicated to the next revolution in precision medicine, with the goal of better patient outcomes driving our core principles,” said Nelson Kick, manager of HPC operations at TGen. “Future-thinking companies like WekaIO complement our core principle of accelerating research and discovery. The ability to run more concurrent high performance genomic workloads will significantly advance our time to discovery.”

WekaIO essentially works by aggregating local storage across a traditional x86-based compute cluster or server farm. It relies on local SSD storage to deliver performance, but the specific amounts and types of flash devices are up to the customer. In general, the working data resides on the SSD, while cold data is auto-tiered to the cloud – either public or private – in an object store, like S3 or Swift.

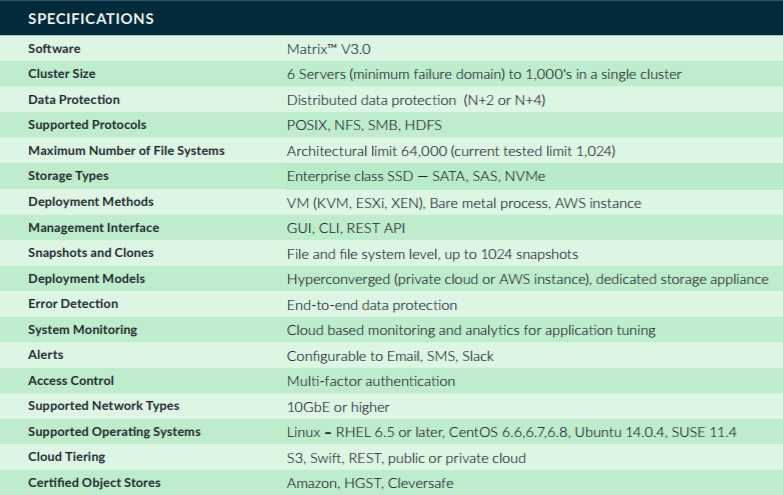

The Matrix software runs on one or two cores on each WekaIO host, so performance scales naturally as the size of the cluster grows. Each core delivers 30,000 IOPS and 400 MB/sec of throughput, and does so with less than 500 µs of latency. Thus, a typical 100-node cluster running Matrix on one core per node can provide 3 million IOPS and 40 GB/sec to an application. The minimum cluster size is six servers, and it scales up from there to thousands of servers. Here are the specs taken from the Matrix datasheet:

Matrix’s closest competition is IBM’s Spectrum Scale (previously known as GPFS). According to WekaIO, when measured with the SPEC SFS 2014 benchmark on AWS instances, Matrix servers processed four times the workload of those running Spectrum Scale. The WekaIO software was able to accomplish this using just 5 percent of the computational resources on those instances.
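The linear scaling figures quoted earlier (30,000 IOPS and 400 MB/sec per core, multiplied across the cluster) can be reproduced with a few lines of arithmetic. The sketch below is only an illustration of that calculation, not anything from WekaIO: the names `CoreSpec` and `cluster_estimate` are hypothetical, and it assumes perfectly linear scaling and decimal units (1 GB = 1,000 MB).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoreSpec:
    """Per-core figures as quoted in the article (hypothetical container)."""
    iops: int = 30_000           # I/O operations per second per core
    throughput_mb_s: int = 400   # MB/sec per core


MIN_CLUSTER_NODES = 6  # minimum cluster size stated in the article


def cluster_estimate(nodes: int, cores_per_node: int = 1,
                     spec: CoreSpec = CoreSpec()) -> dict:
    """Estimate aggregate performance, assuming ideal linear scaling."""
    if nodes < MIN_CLUSTER_NODES:
        raise ValueError(f"cluster needs at least {MIN_CLUSTER_NODES} nodes")
    if cores_per_node not in (1, 2):
        raise ValueError("the article describes one or two cores per host")
    cores = nodes * cores_per_node
    return {
        "iops": cores * spec.iops,
        # Convert MB/sec to GB/sec using decimal units (assumption).
        "throughput_gb_s": cores * spec.throughput_mb_s / 1000,
    }


if __name__ == "__main__":
    # 100 nodes, one core each: matches the 3 million IOPS / 40 GB/sec claim.
    print(cluster_estimate(100))
    # Same cluster with two cores per node doubles both figures.
    print(cluster_estimate(100, cores_per_node=2))
```

With one core per node, a 100-node cluster works out to the 3 million IOPS and 40 GB/sec cited above. Real deployments would likely fall short of this ideal, since network and SSD limits are not modeled here.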

The following video describes the technical rationale for Matrix in more detail and highlights its architectural elements.

<iframe src="https://www.youtube.com/embed/GJe2n_Ao_lU?rel=0&controls=0" width="640" height="360" frameborder="0" allowfullscreen="allowfullscreen"></iframe>