Dec. 4, 2017

By: Michael Feldman

Google researchers have produced an enhanced version of AutoML whose auto-generated neural networks outperform human-designed ones on two industrial-strength computer vision tasks.

AutoML is a tool developed by the Google Brain team that automates the production of deep learning models. It sets up a “controller” neural network that proposes a model to be trained and evaluated for a given task. The evaluation is then fed back to the controller, which uses the information to propose a new model. The tool iterates through this process thousands of times until the proposed models reach an acceptable level of accuracy. In effect, it automates the work human developers would otherwise have to do to design a deep learning model.
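The propose-evaluate-feedback loop described above can be sketched in a few lines of Python. This is a toy illustration only, not Google's implementation: the search space, the `toy_evaluate` scoring function (a stand-in for actually training a model), and the simple policy-gradient-style update are all assumptions made for the example.

```python
import math
import random

# Hypothetical search space: each design decision has a few discrete options.
SEARCH_SPACE = {
    "num_layers": [2, 4, 6],
    "filter_size": [3, 5, 7],
}


def toy_evaluate(model):
    """Stand-in for 'train the proposed model and measure its accuracy'.

    Purely illustrative: it arbitrarily favors 4 layers and 3x3 filters.
    """
    score = 0.5
    score += 0.3 if model["num_layers"] == 4 else 0.0
    score += 0.2 if model["filter_size"] == 3 else 0.0
    return score


def softmax(logits):
    """Turn preference scores into sampling probabilities."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def search(iterations=2000, learning_rate=0.1, seed=0):
    rng = random.Random(seed)
    # The "controller" here is just one preference score (logit) per option.
    logits = {name: [0.0] * len(opts) for name, opts in SEARCH_SPACE.items()}
    baseline = 0.0  # running average score, used as a reference point
    best_model, best_score = None, float("-inf")

    for _ in range(iterations):
        # 1. The controller proposes a model by sampling each decision.
        picks = {}
        for name, opts in SEARCH_SPACE.items():
            probs = softmax(logits[name])
            picks[name] = rng.choices(range(len(opts)), weights=probs)[0]
        model = {name: SEARCH_SPACE[name][i] for name, i in picks.items()}

        # 2. The proposed model is "trained and evaluated".
        score = toy_evaluate(model)
        if score > best_score:
            best_model, best_score = model, score

        # 3. Feedback: shift preferences toward choices that beat the baseline.
        advantage = score - baseline
        baseline += 0.05 * (score - baseline)
        for name, chosen in picks.items():
            probs = softmax(logits[name])
            for i in range(len(probs)):
                grad = (1.0 if i == chosen else 0.0) - probs[i]
                logits[name][i] += learning_rate * advantage * grad

    return best_model, best_score, logits


if __name__ == "__main__":
    model, score, _ = search()
    print(model, score)
```

In a real system, step 2 is the expensive part, since every proposal means training a full network, which is why thousands of iterations translate into a very large compute bill.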

When it was unveiled in May, AutoML tried its luck on two well-known but relatively small datasets: image recognition with CIFAR-10 and language modeling with Penn Treebank. The Google researchers found that the auto-generated models “achieve accuracies on par with state-of-art models designed by machine learning experts (including some on our own team!).”

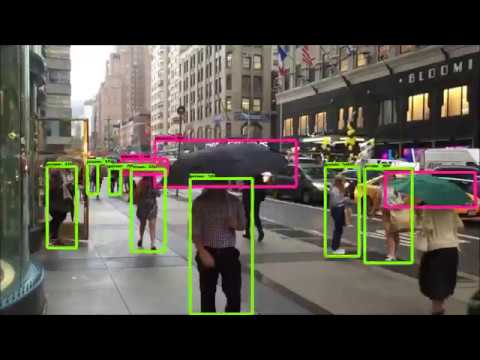

They subsequently decided to apply the tool to large-scale datasets -- the kind more commonly used for real applications. For this they chose ImageNet (image classification) and COCO (object detection), two datasets that are orders of magnitude larger than CIFAR-10 and Penn Treebank.

Because the original AutoML would require many months of training on such datasets, the Google team had to redesign the tool by deriving a second architecture, which they dubbed NASNet. On the ImageNet task, NASNet achieved a prediction accuracy of 82.7 percent, which was 1.2 percentage points better than all previously published results. It was also on par with the best result ever reported on ImageNet, which was based on the SENet neural network. NASNet, however, was about twice as efficient as SENet in terms of computational cost. By transferring the learned features from the ImageNet classification model to object detection, the Google team was also able to surpass previously published performance results on the COCO task.

The researchers suspect that the features learned by NASNet on ImageNet and COCO can be reused in many other computer vision applications, including real-world uses such as self-driving cars and security monitoring. To encourage further work along these lines, the researchers have open sourced the NASNet software so that others in the machine learning community can build upon it.

For more technical detail on the work, check out the Google research note here.