Oct. 15, 2018

By: Michael Feldman

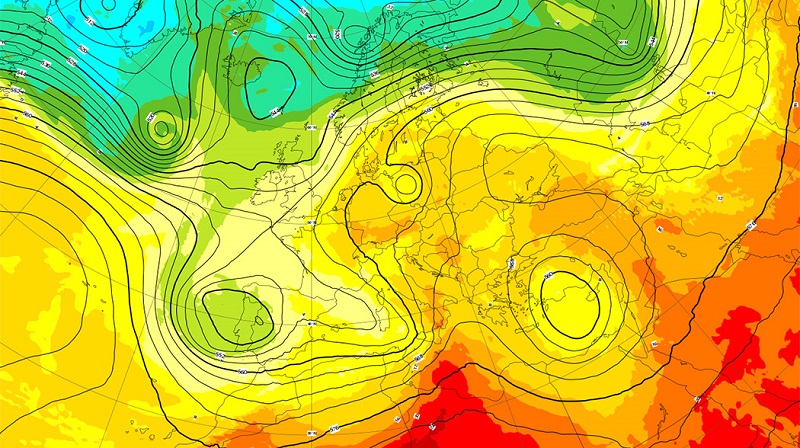

The European Centre for Medium-Range Weather Forecasts (ECMWF) and its partners have begun the second phase of an EU-funded project to develop extreme-scale computing for numerical weather prediction (NWP) and climate modeling.

The project, with the tongue-twisting name of Energy-efficient Scalable Algorithms for Weather Prediction at Exascale, aka ESCAPE, is focused on moving existing European weather and climate codes to newer hardware platforms, especially those that are likely to be the basis of future exascale supercomputers. In the first phase of the project, which kicked off in 2015, much of the effort revolved around rewriting the software for heterogeneous architectures composed of CPUs and GPUs or other types of accelerators. Over the past decade, accelerators have become a critical element of many supercomputers, delivering both increased performance and superior energy efficiency compared to monolithic CPU-based systems.

Energy efficiency is a critical concern for these weather and climate codes, since supercomputing centers like ECMWF have a practical upper limit of about 20 MW of power. CPU-based technology is unlikely to deliver exascale performance anywhere near that power budget, so at least for the initial crop of exascale systems, much of the focus has turned to accelerators like GPUs to make up the shortfall.
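To put the 20 MW limit in perspective, the short sketch below works out the sustained energy efficiency a machine would need to reach one exaflop within that power budget. The 1 exaFLOP/s target is simply the conventional definition of exascale, and the function name is illustrative; neither is taken from the ESCAPE project itself.

```python
def required_gflops_per_watt(target_flops: float, power_cap_watts: float) -> float:
    """Return the efficiency (GFLOP/s per watt) needed to sustain
    target_flops without exceeding power_cap_watts."""
    if power_cap_watts <= 0:
        raise ValueError("power cap must be positive")
    return target_flops / power_cap_watts / 1e9


EXAFLOP = 1e18    # assumed target: 1 exaFLOP/s (conventional exascale threshold)
POWER_CAP = 20e6  # the roughly 20 MW practical limit cited above

efficiency = required_gflops_per_watt(EXAFLOP, POWER_CAP)
print(f"{efficiency:.0f} GFLOP/s per watt")  # prints "50 GFLOP/s per watt"
```

Under these assumptions, an exascale system would have to sustain about 50 GFLOP/s for every watt it draws.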

Despite the use of these accelerators, the systems are also expected to be much larger than they are today. Within 10 years, developers anticipate that 100,000 to 1,000,000 processing elements will be used in supercomputers running global ensemble forecasts. This introduces the problem of fault tolerance and resilience, which can impact timely delivery of forecasts. An additional problem is that using energy-efficient software and hardware can reduce numerical accuracy and stability.

Increased demand for storage is yet another issue to be faced. In the weather forecasting game, exabyte data production could very well arrive before exaflops computing. That suggests these machines will have to deal with I/O bandwidth constraints, not to mention archiving issues. At least some of this can be addressed with more advanced data compression technology, but there’s probably no avoiding the fact that more of the HPC budget for these weather centers will have to be applied to storage infrastructure and relatively less to the computational elements.

The ESCAPE website sums up the challenges thusly:

“For computing, the key figure is the electric power consumption per floating point operation per second (Watts/FLOP/s), or even the power consumption per forecast, while for I/O it is the absolute data volume to archive and the bandwidth available for transferring the data to the archive during production, and dissemination to multiple users. Both aspects are subject to hard limits, i.e. capacity and cost of power, networks and storage, respectively.”
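As a rough illustration of the two compute metrics named in the quote, the sketch below derives watts per FLOP/s and energy per forecast from a run's average power draw, sustained performance, and wall-clock time. The class, its field names, and all of the sample figures are hypothetical and are not ESCAPE measurements.

```python
from dataclasses import dataclass


@dataclass
class ForecastRun:
    avg_power_watts: float    # mean power draw during the run
    sustained_flops: float    # sustained floating-point rate, in FLOP/s
    wall_time_seconds: float  # time taken to produce one forecast

    def watts_per_flops(self) -> float:
        """Power per unit of sustained performance (W per FLOP/s)."""
        return self.avg_power_watts / self.sustained_flops

    def energy_per_forecast_kwh(self) -> float:
        """Total energy consumed by one forecast, in kilowatt-hours."""
        joules = self.avg_power_watts * self.wall_time_seconds
        return joules / 3.6e6  # 1 kWh = 3.6 million joules


# Made-up example: 2 MW average draw, 1 petaFLOP/s sustained, one-hour forecast.
run = ForecastRun(avg_power_watts=2e6, sustained_flops=1e15, wall_time_seconds=3600)
print(f"{run.watts_per_flops():.1e} W per FLOP/s")
print(f"{run.energy_per_forecast_kwh():.0f} kWh per forecast")
```

Energy per forecast is the more application-centric figure of the two: a code change that shortens the run lowers it even if the hardware's power draw stays the same.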

As previously mentioned, one of the main approaches developed by ESCAPE was to exploit more energy-efficient accelerators. In the first phase of the project, portions of the code were optimized for NVIDIA GPUs, as well as different types of Intel CPUs. Developers reported performance speed-ups of 10x to 50x when the code was optimized for GPUs on a single node and an additional 2x to 3x when employing multiple GPUs connected via NVSwitch. Meanwhile, efficiency gains of up to 40 percent were realized for CPUs. In addition, a new technique for performing Fourier transforms was developed with an optical computing device (presumably using technology developed by Optalysys, one of ESCAPE’s phase 1 partners).

A key element of the phase one work was to organize the software into “Weather & Climate Dwarfs,” which represent the algorithmic building blocks of the applications. This divide-and-conquer approach allows developers to optimize the specific algorithms of the dwarfs, making the most effective use of the available heterogeneous hardware. Plus, the production of a well-defined library for these applications will theoretically make future development more manageable as new supercomputing architectures are introduced.

Another focus area for ESCAPE is the use of domain-specific languages (DSLs), which can increase developer productivity while maintaining software portability. In some cases, these languages can even boost performance. Early tests with a DSL applied to a particular dwarf that computes atmospheric advection demonstrated a 2x speed-up for GPU-accelerated code compared to manually adapted software.

The second phase of the project, which will be called ESCAPE-2, is intended to build on the algorithmic work already done on the dwarfs and extend it to other models, like the German national meteorological service’s ICON model and the community ocean model NEMO. In addition, new benchmarks will be developed that represent the compute and data handling patterns of weather and climate models more realistically. These will be used to assess the performance of future HPC systems, with a particular eye toward the pre-exascale and exascale supercomputers being developed under the EuroHPC Joint Undertaking.

The ESCAPE-2 effort will also repurpose Uranie, an uncertainty quantification platform developed by the French Atomic Energy Commission (CEA), for weather and climate codes. The idea here is to quantify the effect of data and compute uncertainties related to forecasting.

Other phase 2 work will revolve around improving time-to-solution and energy-to-solution, strengthening algorithm resilience against soft or hard failures, and applying machine learning technology.

To do all this, the EU has allocated EUR 4 million to fund the 12 ESCAPE-2 partners. The coordinating organization is once again the UK-based ECMWF, which is receiving over EUR 760,000 for its lead role. The remainder of the funding will be spread across the other 11 partners, which comprise HPC centers, meteorological and hydrological organizations, universities, and Bull SAS, the only commercial partner on the phase 2 effort.

The ESCAPE-2 effort was officially launched at a kickoff meeting at ECMWF in early October and is scheduled to run for three years.